graph TD

A[Hypothesis Testing] --> B[The Concept of<br/>Hypothesis Testing]

A --> C[Hypothesis Testing for<br/>the Population Mean]

A --> D[Tests for π]

B --> B1[Critical Values of Z<br/>and Rejection Regions]

B --> B2[Error Probability]

B --> B3[Formulation of the<br/>Decision Rule]

C --> C1[One-tailed and<br/>Two-tailed Tests]

C --> C2[p-value]

C --> C3[Tests for μ,<br/>Small Samples]

D --> D1[One-tailed and<br/>Two-tailed Tests]

D --> D2[p-value]

style A fill:#333,stroke:#000,stroke-width:4px,color:#fff

style B fill:#fff,stroke:#000,stroke-width:2px

style C fill:#fff,stroke:#000,stroke-width:2px

style D fill:#fff,stroke:#000,stroke-width:2px

9 Hypothesis Testing

9.1 Opening Scenario: Banking Deregulation and Strategic Decision-Making

First Bank of America Corporate Boardroom, Chicago

The polished mahogany table reflected the morning light as Lawrence Hopkins, Manager of Customer Relations Division, spread his analysis documents before the executive committee. The room fell silent.

“Ladies and gentlemen,” Hopkins began, his voice steady despite the weight of the moment, “we’re facing the most significant strategic decision in our bank’s history. Following our merger with Great Lakes National and the ongoing deregulation of the banking industry, we must determine whether our assumptions about customer behavior, deposit patterns, and market position are statistically valid—or merely wishful thinking.”

He clicked to display the first slide: a graph showing First Bank’s market share rising to 55% in Q4 1998, substantially ahead of competitors Magna Bank and City National.

“The question isn’t whether we’re doing well,” Hopkins continued. “The question is: can we prove it with statistical certainty? Our plans to increase fees based on average daily balances, to modify service offerings, and to pursue aggressive expansion all hinge on hypotheses we must test rigorously.”

A senior vice president leaned forward. “Lawrence, what specific claims are we testing?”

Hopkins nodded. “Excellent question. Here are our key hypotheses:

Deposit Hypothesis: We claim average customer deposits have been increasing and now exceed $312 per account. Can we prove this isn’t just sampling variation?

Market Share Hypothesis: We assert that our market share is exactly 55%. But with daily fluctuations, how confident are we?

Fee Sensitivity Hypothesis: We believe that fewer than 65% of customers will object to a $2 monthly fee for returned checks. If we’re wrong, we’ll face significant customer attrition.

Business Account Hypothesis: We claim commercial accounts average at least $340,000. This determines whether we establish a separate commercial banking division—a multi-million dollar investment.”

The Chief Financial Officer interjected: “What happens if our hypotheses are wrong?”

“Exactly the risk we’re managing,” Hopkins responded. “In hypothesis testing, we face two types of errors:

Type I Error: We reject a true hypothesis—perhaps we decide deposits haven’t increased when they actually have, causing us to miss a growth opportunity.

Type II Error: We fail to reject a false hypothesis—perhaps we implement the fee increase believing customers will tolerate it, when in reality they won’t, leading to massive account closures.”

He paused for effect. “The statistical tools we’re about to employ—critical values, rejection regions, significance levels, and p-values—aren’t academic exercises. They’re risk management instruments that will guide decisions affecting hundreds of millions of dollars.”

This chapter explores the framework Hopkins and his team will use: hypothesis testing. Unlike confidence intervals that estimate unknown parameters, hypothesis testing evaluates claims about populations and determines whether sample evidence supports or refutes those claims. The consequences of these statistical decisions ripple through every aspect of business strategy, from pricing policies to market expansion, from product development to quality control.

Learning Objectives:

After completing this chapter, you will be able to:

- Formulate null and alternative hypotheses for business decision scenarios

- Calculate test statistics (Z and t) and compare them to critical values

- Interpret rejection regions and make decisions based on sample evidence

- Distinguish between Type I and Type II errors and their business implications

- Understand and apply significance levels (α values) appropriately

- Conduct two-tailed and one-tailed hypothesis tests for population means

- Calculate and interpret p-values for hypothesis tests

- Apply hypothesis testing to population proportions

- Use t-distribution for small sample hypothesis tests

- Make data-driven business recommendations based on statistical evidence

9.2 8.1 Introduction: The Role of Hypothesis Testing in Decision-Making

The purpose of statistical analysis is to reduce uncertainty in decision-making. Managers make better decisions when they have sufficient information at their disposal. Hypothesis testing is an exceptionally effective analytical tool for obtaining valuable information under a wide variety of circumstances.

Consider these common business examples:

Quality Control: A soft drink bottler must determine whether the average weight of bottle contents is 16 ounces (μ = 16 ounces).

Defect Management: A computer software producer wishes to certify that the proportion of defective products is less than 3% (π < 0.03).

Cost Reduction: A sports equipment manufacturer wants to know whether there is evidence that a production process has reduced average production costs below the current level of $5 per unit (μ < 5).

These illustrations are virtually unlimited in business settings. If answers to these questions—and many others—can be obtained with some degree of assurance, decision-making becomes more confident and is less likely to lead to costly errors.

9.2.1 The Logic of Hypothesis Testing

Hypothesis testing operates on a fundamental principle: we make an assumption about a population parameter, collect sample evidence, and then determine whether that evidence is consistent with our assumption or contradicts it strongly enough to reject the assumption.

Let’s walk through the conceptual framework with a concrete example.

Example: The Soft Drink Bottler’s Dilemma

A bottling company fills bottles that should contain 16 ounces of beverage. The production manager needs to verify this claim. The manager might:

- Assume the bottles contain an average of 16 ounces (μ = 16)

- Collect a sample of bottles and measure their contents

- Calculate how unusual the sample result would be if the assumption were true

- Decide whether to maintain or reject the assumption based on the evidence

But here’s the critical question: How different must the sample mean be from 16 ounces before we conclude the population mean isn’t 16?

If a sample of bottles averages 16.15 ounces, should we conclude μ ≠ 16? Probably not. This small difference could easily result from random sampling error—due to chance, some bottles in the sample might be slightly fuller, producing a sample mean that modestly overestimates the population mean.

However, if the sample averages 17.5 ounces, we’d have much stronger evidence that something is wrong with the filling process.

Hypothesis testing provides a formal, probability-based framework for making this distinction between “acceptable variation” and “statistically significant difference.”

9.3 8.2 The Concept of Hypothesis Testing: Null and Alternative Hypotheses

9.3.1 Formulating Hypotheses

To conduct a hypothesis test, we make some inference or assumption about the population. The soft drink bottler cited earlier might assume or hypothesize that the average content is 16 ounces (μ = 16). This becomes the null hypothesis (H₀).

The null hypothesis is tested against the alternative hypothesis (Hₐ), which states the opposite. In this case, the average content is not 16 ounces (μ ≠ 16).

Therefore, we would have:

H_0: \mu = 16 \quad H_A: \mu \neq 16

Understanding the Term “Null”

The term “null” implies nothing or no effect. The term arose from early agricultural researchers who tested the effectiveness of new fertilizers to determine their impact on crop yields. They assumed the fertilizer made no difference in yield until it proved to produce an effect.

Critical convention: The null hypothesis traditionally contains some reference to an equality sign: “=”, “≥”, or “≤”. We’ll explore this more fully when discussing one-tailed tests.

9.3.2 The Presumption of Innocence: Never “Accepting” the Null Hypothesis

Based on sample data, the null hypothesis is either rejected or not rejected. We can never “accept” the null hypothesis as true.

Not rejecting the null hypothesis simply means the sample evidence isn’t strong enough to lead to its rejection.

Even if the sample mean X̄ = 16 exactly, this doesn’t prove that μ = 16. It could be that μ is actually 15.8 (or any other number), and due to sampling error, the sample mean just happened to equal 16.

Legal Analogy: Testing a hypothesis is like putting a person on trial. The defendant is found either guilty or not guilty. A verdict of “innocent” is never rendered. A not guilty verdict simply means the evidence isn’t strong enough to find the defendant guilty—it doesn’t mean the person is actually innocent.

Statistical Burden of Proof: When conducting a hypothesis test, the null hypothesis is presumed “innocent” (true) until a preponderance of evidence indicates it is “guilty” (false). Just as in a legal setting, evidence of guilt must be established beyond reasonable doubt. Before we reject the null hypothesis, the sample mean must differ significantly from the hypothesized population mean—the evidence must be very convincing and conclusive.

A conclusion based on rejection of the null hypothesis is more definitive than one ending in a decision not to reject. Rejecting H₀ means the sample evidence is overwhelmingly inconsistent with the hypothesis. Not rejecting H₀ means the evidence is insufficient to conclude otherwise—it doesn’t prove the hypothesis is correct.

9.3.3 Statistical Significance vs. Practical Insignificance

Suppose we sample n bottles and find a mean of X̄ = 16.15 ounces. Can we conclude the population mean isn’t 16? After all, 16.15 is not 16!

Probably not. This small difference could be statistically insignificant because it could be easily explained as simple sampling error. Due to chance, some bottles in the sample might be slightly fuller, producing a sample mean that modestly overestimates the population mean.

The sample evidence that X̄ = 16.15 isn’t strong enough to trigger rejection of the null hypothesis that μ = 16.

The Central Question: If the difference between the hypothesized value of 16 and the sample finding of 16.15 is insufficient to reject the null hypothesis, then how large must the difference be to be statistically significant and lead to rejection?

This question leads us to the Z-transformation and the concept of critical values.

9.4 8.3 Critical Values of Z and Rejection Regions

9.4.1 The Z-Transformation

Recall from our discussion of sampling distributions that we can transform any unit of measurement (such as the bottler’s ounces) into corresponding Z-values using the Z-formula:

Z = \frac{\bar{X} - \mu}{\sigma_{\bar{x}}} = \frac{\bar{X} - \mu}{\frac{\sigma}{\sqrt{n}}}

When σ is unknown, we use the sample standard deviation s:

Z = \frac{\bar{X} - \mu}{\frac{s}{\sqrt{n}}}

The resulting normal distribution of Z-values has a mean of zero and a standard deviation of one.

9.4.2 Establishing Critical Values and Rejection Regions

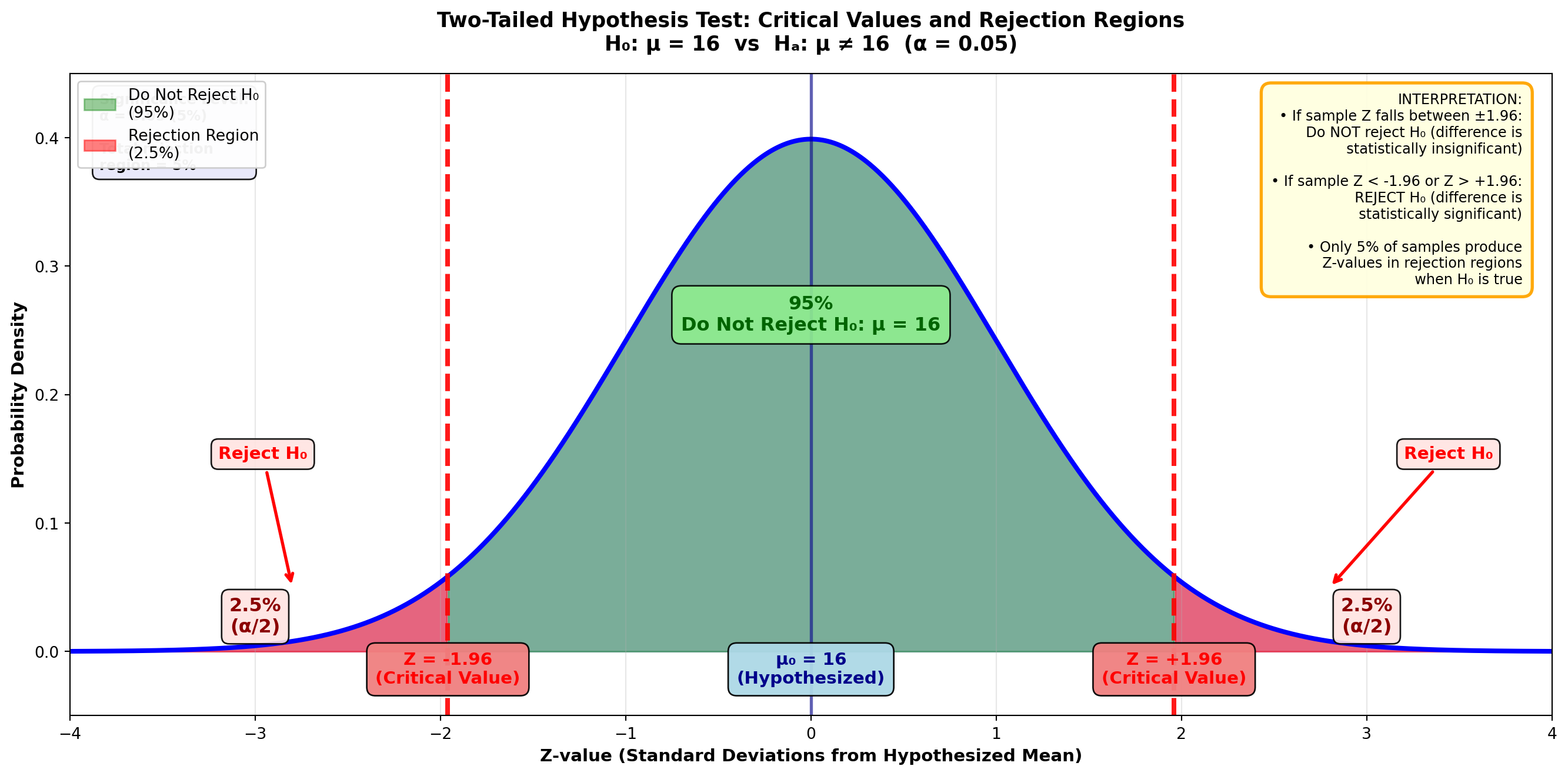

The Empirical Rule tells us that 95% of all sample means (X̄’s) in the sampling distribution are within 1.96 standard errors of the unknown population mean, as shown in Figure 8.1.

Figure 8.1: Critical Values of Z and Rejection Regions (Two-Tailed Test, α = 0.05)

Code

import matplotlib.pyplot as plt

import numpy as np

from scipy import stats

fig, ax = plt.subplots(figsize=(14, 7))

# Generate standard normal distribution

x = np.linspace(-4, 4, 1000)

y = stats.norm.pdf(x, 0, 1)

# Plot the distribution

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.2, color='blue')

# Shade the non-rejection region (middle 95%)

x_middle = x[(x >= -1.96) & (x <= 1.96)]

y_middle = stats.norm.pdf(x_middle, 0, 1)

ax.fill_between(x_middle, y_middle, alpha=0.4, color='green',

label='Do Not Reject H₀\n(95%)')

# Shade the left rejection region

x_left = x[x < -1.96]

y_left = stats.norm.pdf(x_left, 0, 1)

ax.fill_between(x_left, y_left, alpha=0.5, color='red',

label='Rejection Region\n(2.5%)')

# Shade the right rejection region

x_right = x[x > 1.96]

y_right = stats.norm.pdf(x_right, 0, 1)

ax.fill_between(x_right, y_right, alpha=0.5, color='red')

# Draw vertical lines at critical values

ax.axvline(-1.96, color='red', linestyle='--', linewidth=3, alpha=0.9)

ax.axvline(1.96, color='red', linestyle='--', linewidth=3, alpha=0.9)

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

# Add critical value labels

ax.text(-1.96, -0.025, 'Z = -1.96\n(Critical Value)', ha='center', fontsize=11,

fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(1.96, -0.025, 'Z = +1.96\n(Critical Value)', ha='center', fontsize=11,

fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(0, -0.025, 'μ₀ = 16\n(Hypothesized)', ha='center', fontsize=11,

fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightblue', alpha=0.95))

# Add percentage labels

ax.text(-3, 0.015, '2.5%\n(α/2)', ha='center', fontsize=12, fontweight='bold',

color='darkred',

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.9))

ax.text(3, 0.015, '2.5%\n(α/2)', ha='center', fontsize=12, fontweight='bold',

color='darkred',

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.9))

ax.text(0, 0.25, '95%\nDo Not Reject H₀: μ = 16', ha='center', fontsize=12,

fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightgreen', alpha=0.9))

# Add arrows showing rejection regions

ax.annotate('Reject H₀', xy=(-2.8, 0.05), xytext=(-3.2, 0.15),

fontsize=11, fontweight='bold', color='red',

arrowprops=dict(arrowstyle='->', lw=2, color='red'),

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.9))

ax.annotate('Reject H₀', xy=(2.8, 0.05), xytext=(3.2, 0.15),

fontsize=11, fontweight='bold', color='red',

arrowprops=dict(arrowstyle='->', lw=2, color='red'),

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.9))

# Formatting

ax.set_title('Two-Tailed Hypothesis Test: Critical Values and Rejection Regions\n' +

'H₀: μ = 16 vs Hₐ: μ ≠ 16 (α = 0.05)',

fontsize=13, fontweight='bold', pad=15)

ax.set_xlabel('Z-value (Standard Deviations from Hypothesized Mean)',

fontsize=11, fontweight='bold')

ax.set_ylabel('Probability Density', fontsize=11, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.05, 0.45)

ax.grid(True, alpha=0.3, axis='x')

ax.legend(loc='upper left', fontsize=10, framealpha=0.95)

# Add explanation box

explanation = (

"INTERPRETATION:\n"

"• If sample Z falls between ±1.96:\n"

" Do NOT reject H₀ (difference is\n"

" statistically insignificant)\n\n"

"• If sample Z < -1.96 or Z > +1.96:\n"

" REJECT H₀ (difference is\n"

" statistically significant)\n\n"

"• Only 5% of samples produce\n"

" Z-values in rejection regions\n"

" when H₀ is true"

)

ax.text(0.98, 0.97, explanation, transform=ax.transAxes,

fontsize=9, verticalalignment='top', horizontalalignment='right',

bbox=dict(boxstyle='round,pad=0.7', facecolor='lightyellow',

edgecolor='orange', linewidth=2, alpha=0.95))

# Add significance level note

ax.text(0.02, 0.97, 'Significance Level:\nα = 0.05 (5%)\n\nTotal rejection\nregion = 5%',

transform=ax.transAxes, fontsize=9, fontweight='bold',

verticalalignment='top',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lavender', alpha=0.9))

plt.tight_layout()

plt.show()These Z-values of ±1.96 are critical values that determine the rejection regions.

To find them:

- Divide the 95% confidence level by 2

- In the Z-table, the area of 0.95/2 = 0.4750 corresponds to a Z-value of 1.96

- The remaining 5% is distributed between the two tails, with 2.5% in each rejection region

This 5% is the significance level, or alpha value (α) of the test.

9.4.3 The Logic Behind Rejection Regions

In Figure 8.1, if the bottler’s hypothesis is correct and μ = 16 ounces, it’s unlikely (only a 5% chance) that any given sample would produce a Z-value falling in either rejection region.

Therefore, if a Z-value greater than 1.96 or less than -1.96 occurs, it’s unlikely the distribution is centered at μ = 16, and the null hypothesis should be rejected.

9.5 8.4 Formulating the Decision Rule

These critical Z-values of ±1.96 allow us to establish a decision rule stating whether to reject the null hypothesis or not.

Do not reject the null hypothesis if the Z-value is between ±1.96.

Reject the null hypothesis if the Z-value is less than -1.96 or greater than +1.96.

The underlying logic, based simply on probabilities, should be clear:

- If the null hypothesis is true, it’s unlikely we could obtain a Z-value greater than 1.96 or less than -1.96

- Only 5% of all samples in the sampling distribution could produce such extreme Z-values

- Therefore, if such an extreme Z-value occurs, it’s unlikely that μ = 16, and we should reject the null hypothesis

9.6 8.5 Error Probability: Type I and Type II Errors

When testing a hypothesis, we can make two types of errors.

9.6.1 Type I Error: Rejecting a True Hypothesis

A Type I error is rejecting a null hypothesis that is actually true.

In Figure 8.1, if the bottler’s hypothesis is true and μ = 16, there’s still a 5% chance that a sample mean could fall in one of the rejection regions, causing us to incorrectly reject the null hypothesis:

- 2.5% of all sample means in the sampling distribution produce a Z-value > 1.96 (right-tail rejection region)

- 2.5% produce a Z-value < -1.96 (left-tail rejection region)

This 5% is the significance level, or alpha value (α), and represents the probability of a Type I error.

Type I Error: Rejecting a true hypothesis.

Probability of Type I Error = α (the significance level at which the hypothesis is tested)

9.6.2 Type II Error: Failing to Reject a False Hypothesis

A Type II error is not rejecting a null hypothesis that is actually false.

If the null hypothesis H₀: μ = 16 is incorrect, but the test fails to detect this, we’ve committed a Type II error.

Key distinction:

- Probability of Type I error = α (the selected significance level)

- Probability of Type II error = β (beta), which is not easily determined

- Important: We cannot assume that α + β = 1

9.6.3 Selecting the Significance Level

Commonly selected significance levels (α values) for hypothesis testing are:

- 10% (α = 0.10): More lenient, higher risk of Type I error

- 5% (α = 0.05): Standard in many applications

- 1% (α = 0.01): More stringent, lower risk of Type I error

However, there’s nothing magical about these values. You could test a hypothesis at a 4% significance level if you chose to.

The selection of α depends on which type of error—Type I or Type II—you most want to avoid.

If rejecting a true hypothesis (Type I error) is more serious: - Select a low α value (1% or 5%) - This minimizes the probability of Type I error - Example: Medical drug testing where false positives are dangerous

If failing to reject a false hypothesis (Type II error) is more serious: - Select a higher α value (10%) - This reduces the probability of Type II error - Example: Quality control where missing defects is costly

9.6.4 Business Application: The Bottler’s Decision

Suppose the soft drink bottler rejects the null hypothesis H₀: μ = 16 and shuts down the bottling process to adjust the fill level. However, if the mean is actually still 16 ounces, the bottler has committed a Type I error.

If this is more costly than a Type II error (allowing the process to continue when μ ≠ 16), the bottler should select a low α value, such as 1%, for the test.

9.7 8.6 Two-Tailed Test for μ: Complete Example

Now you’re prepared to conduct a complete hypothesis test. There are four steps involved:

Step 1: State the hypotheses

Step 2: Calculate the test statistic Z based on sample results

Step 3: Determine the decision rule based on critical Z-values

Step 4: Interpretation and conclusions

9.7.1 Example 8.1: Soft Drink Bottler Quality Control

Scenario: A soft drink bottler wants to test the hypothesis that the population mean is 16 ounces, selecting a significance level of 5%.

Because the hypothesis is μ = 16, the null and alternative hypotheses are:

H_0: \mu = 16 \quad H_A: \mu \neq 16

To test the hypothesis, we calculate the test statistic Z and compare it with the critical Z-values.

Test Statistic Formulas:

When σ is known:

Z = \frac{\bar{X} - \mu_H}{\frac{\sigma}{\sqrt{n}}}

When σ is unknown (most common):

Z = \frac{\bar{X} - \mu_H}{\frac{s}{\sqrt{n}}}

Where: - X̄ = sample mean - μ_H = hypothesized value of the population mean (under H₀) - σ/√n or s/√n = standard error of the sampling distribution

Sample Data: - Sample size: n = 50 bottles - Sample mean: X̄ = 16.357 ounces - Sample standard deviation: s = 0.866 ounces

Calculation:

Z = \frac{16.357 - 16}{\frac{0.866}{\sqrt{50}}} = \frac{0.357}{0.122} = 2.91

Step 3: Determine Decision Rule

With α = 0.05 (5% significance level) divided between two tails:

- Each tail contains 2.5% of the distribution

- The remaining 95% divided by 2 gives area = 0.4750

- From Z-table: Area of 0.4750 corresponds to Z = ±1.96

Do not reject H₀ if -1.96 ≤ Z ≤ 1.96

Reject H₀ if Z < -1.96 or Z > 1.96

Figure 8.2: Hypothesis Test for Average Bottle Contents

Reject H₀ Do Not Reject H₀ Reject H₀

(2.5%) (95%) (2.5%)

↓ ↓

|-------|-------------------|------------------|-------|

-∞ -1.96 0 1.96 +∞

↑

Sample Z = 2.91Note that rejection regions exist in both tails. If Z > 1.96 or Z < -1.96, we reject the null hypothesis. This is called a two-tailed test.

Step 4: Interpretation and Conclusion

The test statistic from the sample (Z = 2.91) exceeds the critical value (1.96) and falls in the right-tail rejection region.

Conclusion: “The null hypothesis is rejected at the 5% significance level.”

Business Interpretation: It’s simply not likely that a population with a mean of 16 could yield a sample producing Z > 1.96. There’s only a 2.5% probability that Z could exceed 1.96 (and only 2.5% probability that Z < -1.96) if μ actually equals 16.

Therefore, the null hypothesis H₀: μ = 16 should be rejected at the 5% significance level. The bottling process requires adjustment.

Does this mean μ is definitely not 16? Not with absolute certainty. If μ = 16, 2.5% of all samples of size n = 50 would still generate a Z > 1.96. The population mean could be 16, in which case we’ve committed a Type I error by rejecting H₀. But this is unlikely because P(Z > 1.96 | μ = 16) is only 2.5%.

9.8 8.7 Hypothesis Testing with Technology: Python Output

Python Output for Soft Drink Bottler Example:

Z-Test

Test of mu = 16.000 vs mu not = 16.000

The assumed sigma = 0.866

Variable N Mean StDev SE Mean Z P-Value

Ounces 50 16.357 0.866 0.122 2.91 0.0037The Python output provides: - Sample size (N = 50) - Sample mean (16.357) - Sample standard deviation (0.866) - Standard error (0.122) - Test statistic (Z = 2.91) - P-value (0.0037) ← We’ll discuss this in the next section

9.9 Section Exercises

1. What are the four steps in conducting a hypothesis test?

2. Explain in your own words why a decision rule must be used to determine whether the null hypothesis should be rejected. What role does probability play in this decision?

3. What is meant by an “insignificant difference” between the hypothesized population mean and the sample mean?

4. Why is the null hypothesis never “accepted” as true?

5. What role do critical Z-values play in the testing process? How are they determined? Include a graph in your response.

6. What is the “significance level” in a test? How does it influence the critical Z-values? Include a graph in your response.

7. Differentiate between Type I and Type II errors. Give an example of each.

8. Using a graph, clearly illustrate how the probability of a Type I error equals the significance level (α value) of a test.

9. If a Type II error is considered more serious in a certain situation, would you select a high or low α value? Explain.

10. Purchasing Manager Computer Costs: As purchasing manager for a large insurance company, you must decide whether to upgrade office computers. You’ve been told the average cost of computers is $2,100. A sample of 64 retailers reveals an average price of $2,251 with a standard deviation of $812. At a 5% significance level, does it appear your information is correct?

11. New Car Purchase: Seduced by commercials, you’ve been persuaded to buy a new car. You think you’ll have to pay $25,000 for the car you want. As a careful shopper, you check prices of 40 possible vehicles and find an average cost of $27,312 with a standard deviation of $8,012. Wishing to avoid a Type II error, you test the hypothesis that the average price is $25,000 at a 10% significance level. What is your conclusion?

12. Employee Commute Time: Due to excessive time spent commuting to work, the office where you work in downtown Chicago is considering staggering employee work hours. The manager believes employees spend an average of 50 minutes commuting to work. Seventy employees average 47.2 minutes with a standard deviation of 18.9 minutes. Set α = 1% and test the hypothesis.

End of Stage 1

This completes the first stage covering: - Introduction to hypothesis testing - Null and alternative hypotheses formulation - Critical values and rejection regions - Type I and Type II errors - Two-tailed tests for μ with large samples - Decision rule formulation

Coming in Stage 2: - One-tailed tests (left-tail and right-tail) - p-value calculation and interpretation - Small sample tests using t-distribution # Hypothesis Testing - Stage 2

9.10 8.8 One-Tailed Tests for μ: When Direction Matters

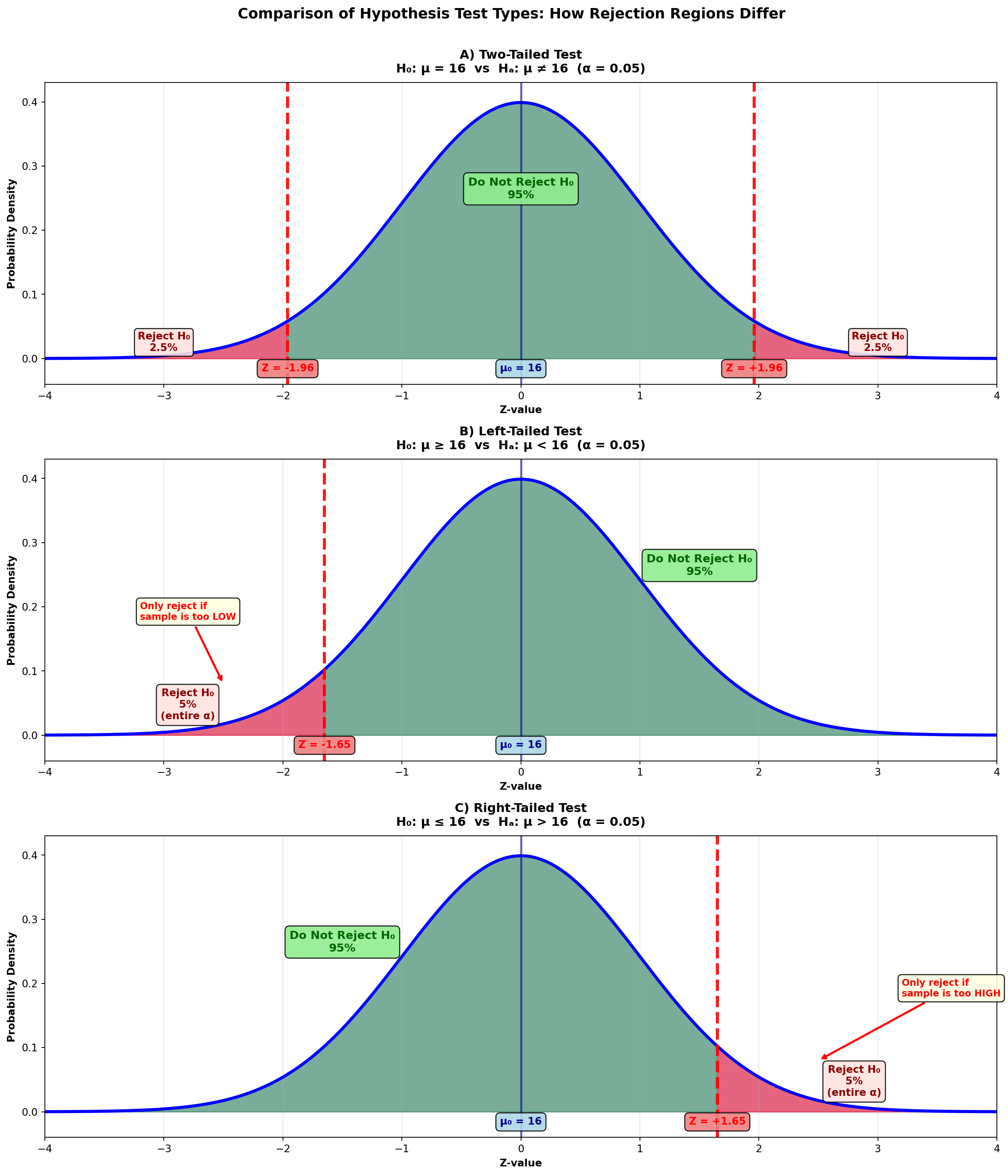

The tests performed in the previous section were two-tailed tests because there were rejection regions in both tails. The hypothesis test for the bottler’s claim that μ = 16 would be rejected if the sample statistic was either too high or too low. Either way, it appears that μ isn’t 16, and the null hypothesis is rejected.

However, there are many occasions when we’re only interested in one extreme or the other:

A seafood restaurant in Kansas City doesn’t care how fast lobsters arrive from the East Coast—they’re only concerned if shipment takes too long.

A retail store will only be alarmed if revenues fall to levels that are too low. High sales aren’t a problem.

In each of these cases, concern focuses on one extreme or the other, and a one-tailed test is performed.

9.10.1 Comparison of Two-Tailed and One-Tailed Tests

Figure 8.3: Comparison of Two-Tailed and One-Tailed Tests

Code

import matplotlib.pyplot as plt

import numpy as np

from scipy import stats

fig, axes = plt.subplots(3, 1, figsize=(14, 16))

# Generate standard normal distribution

x = np.linspace(-4, 4, 1000)

y = stats.norm.pdf(x, 0, 1)

# ========== Panel A: Two-Tailed Test ==========

ax = axes[0]

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.2, color='blue')

# Shade non-rejection region (middle 95%)

x_middle = x[(x >= -1.96) & (x <= 1.96)]

y_middle = stats.norm.pdf(x_middle, 0, 1)

ax.fill_between(x_middle, y_middle, alpha=0.4, color='green')

# Shade rejection regions

x_left = x[x < -1.96]

y_left = stats.norm.pdf(x_left, 0, 1)

ax.fill_between(x_left, y_left, alpha=0.5, color='red', label='Rejection Region')

x_right = x[x > 1.96]

y_right = stats.norm.pdf(x_right, 0, 1)

ax.fill_between(x_right, y_right, alpha=0.5, color='red')

# Critical value lines

ax.axvline(-1.96, color='red', linestyle='--', linewidth=3, alpha=0.9)

ax.axvline(1.96, color='red', linestyle='--', linewidth=3, alpha=0.9)

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

# Labels

ax.text(-1.96, -0.02, 'Z = -1.96', ha='center', fontsize=10, fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightcoral', alpha=0.9))

ax.text(1.96, -0.02, 'Z = +1.96', ha='center', fontsize=10, fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightcoral', alpha=0.9))

ax.text(0, -0.02, 'μ₀ = 16', ha='center', fontsize=10, fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightblue', alpha=0.9))

ax.text(-3, 0.012, 'Reject H₀\n2.5%', ha='center', fontsize=10, fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.3', facecolor='mistyrose', alpha=0.9))

ax.text(3, 0.012, 'Reject H₀\n2.5%', ha='center', fontsize=10, fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.3', facecolor='mistyrose', alpha=0.9))

ax.text(0, 0.25, 'Do Not Reject H₀\n95%', ha='center', fontsize=11, fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightgreen', alpha=0.9))

# Title and formatting

ax.set_title('A) Two-Tailed Test\nH₀: μ = 16 vs Hₐ: μ ≠ 16 (α = 0.05)',

fontsize=12, fontweight='bold', pad=10)

ax.set_xlabel('Z-value', fontsize=10, fontweight='bold')

ax.set_ylabel('Probability Density', fontsize=10, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.04, 0.43)

ax.grid(True, alpha=0.3, axis='x')

# ========== Panel B: Left-Tailed Test ==========

ax = axes[1]

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.2, color='blue')

# Shade non-rejection region (right 95%)

x_right_accept = x[x >= -1.65]

y_right_accept = stats.norm.pdf(x_right_accept, 0, 1)

ax.fill_between(x_right_accept, y_right_accept, alpha=0.4, color='green')

# Shade rejection region (left 5%)

x_left_reject = x[x < -1.65]

y_left_reject = stats.norm.pdf(x_left_reject, 0, 1)

ax.fill_between(x_left_reject, y_left_reject, alpha=0.5, color='red', label='Rejection Region')

# Critical value line

ax.axvline(-1.65, color='red', linestyle='--', linewidth=3, alpha=0.9)

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

# Labels

ax.text(-1.65, -0.02, 'Z = -1.65', ha='center', fontsize=10, fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightcoral', alpha=0.9))

ax.text(0, -0.02, 'μ₀ = 16', ha='center', fontsize=10, fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightblue', alpha=0.9))

ax.text(-2.8, 0.025, 'Reject H₀\n5%\n(entire α)', ha='center', fontsize=10, fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.9))

ax.text(1.5, 0.25, 'Do Not Reject H₀\n95%', ha='center', fontsize=11, fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightgreen', alpha=0.9))

# Arrow annotation

ax.annotate('Only reject if\nsample is too LOW', xy=(-2.5, 0.08), xytext=(-3.2, 0.18),

fontsize=9, fontweight='bold', color='red',

arrowprops=dict(arrowstyle='->', lw=2, color='red'),

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightyellow', alpha=0.9))

# Title and formatting

ax.set_title('B) Left-Tailed Test\nH₀: μ ≥ 16 vs Hₐ: μ < 16 (α = 0.05)',

fontsize=12, fontweight='bold', pad=10)

ax.set_xlabel('Z-value', fontsize=10, fontweight='bold')

ax.set_ylabel('Probability Density', fontsize=10, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.04, 0.43)

ax.grid(True, alpha=0.3, axis='x')

# ========== Panel C: Right-Tailed Test ==========

ax = axes[2]

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.2, color='blue')

# Shade non-rejection region (left 95%)

x_left_accept = x[x <= 1.65]

y_left_accept = stats.norm.pdf(x_left_accept, 0, 1)

ax.fill_between(x_left_accept, y_left_accept, alpha=0.4, color='green')

# Shade rejection region (right 5%)

x_right_reject = x[x > 1.65]

y_right_reject = stats.norm.pdf(x_right_reject, 0, 1)

ax.fill_between(x_right_reject, y_right_reject, alpha=0.5, color='red', label='Rejection Region')

# Critical value line

ax.axvline(1.65, color='red', linestyle='--', linewidth=3, alpha=0.9)

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

# Labels

ax.text(1.65, -0.02, 'Z = +1.65', ha='center', fontsize=10, fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightcoral', alpha=0.9))

ax.text(0, -0.02, 'μ₀ = 16', ha='center', fontsize=10, fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightblue', alpha=0.9))

ax.text(2.8, 0.025, 'Reject H₀\n5%\n(entire α)', ha='center', fontsize=10, fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.9))

ax.text(-1.5, 0.25, 'Do Not Reject H₀\n95%', ha='center', fontsize=11, fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightgreen', alpha=0.9))

# Arrow annotation

ax.annotate('Only reject if\nsample is too HIGH', xy=(2.5, 0.08), xytext=(3.2, 0.18),

fontsize=9, fontweight='bold', color='red',

arrowprops=dict(arrowstyle='->', lw=2, color='red'),

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightyellow', alpha=0.9))

# Title and formatting

ax.set_title('C) Right-Tailed Test\nH₀: μ ≤ 16 vs Hₐ: μ > 16 (α = 0.05)',

fontsize=12, fontweight='bold', pad=10)

ax.set_xlabel('Z-value', fontsize=10, fontweight='bold')

ax.set_ylabel('Probability Density', fontsize=10, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.04, 0.43)

ax.grid(True, alpha=0.3, axis='x')

# Overall title

fig.suptitle('Comparison of Hypothesis Test Types: How Rejection Regions Differ',

fontsize=14, fontweight='bold', y=0.995)

plt.tight_layout(rect=[0, 0, 1, 0.99])

plt.show()Two-Tailed Test: - Tests if μ differs from hypothesized value in either direction - Rejection regions in both tails (α/2 in each) - Critical values: ±1.96 for α = 0.05 - Use when: Testing for “equal to” or “different from”

Left-Tailed Test: - Tests if μ is less than hypothesized value - Rejection region only in left tail (entire α) - Critical value: -1.65 for α = 0.05 - Use when: Testing “at least” claims (H₀: μ ≥ value)

Right-Tailed Test: - Tests if μ is greater than hypothesized value - Rejection region only in right tail (entire α) - Critical value: +1.65 for α = 0.05 - Use when: Testing “at most” claims (H₀: μ ≤ value)

9.10.2 Left-Tailed Test: Testing “At Least” Claims

Instead of hypothesizing that the average content level is exactly 16 ounces, suppose the bottler claims the average content level is “at least 16 ounces.” The null hypothesis becomes H₀: μ ≥ 16 (16 or more).

The alternative hypothesis states the opposite, and the complete set of hypotheses is:

H_0: \mu \geq 16 \quad H_A: \mu < 16

Figure 8.3 (B) shows that the hypothesis H₀: μ ≥ 16 is not rejected if the sample statistic is above 16. The hypothesis H₀: μ ≥ 16 allows for values above 16. Sample means such as 16.3, 16.5, or even 17 and 18 support, not refute, the claim that μ ≥ 16.

Only values significantly below 16 can cause rejection of the null hypothesis. Therefore, a rejection region appears only in the left tail, and the entire α value is placed in this single rejection region.

9.10.3 Right-Tailed Test: Testing “At Most” Claims

Suppose the bottler claims that the average content level is “at most 16.” The null hypothesis is now written as H₀: μ ≤ 16. The hypotheses are:

H_0: \mu \leq 16 \quad H_A: \mu > 16

Figure 8.3 (C) shows that now low sample statistics don’t lead to rejection. The null hypothesis H₀: μ ≤ 16 allows for values below 16. Sample means such as 15, or even 14, support the claim that μ ≤ 16.

Only values significantly above 16 cause rejection. Therefore, there’s a rejection region only in the right tail, and the entire α value is placed in this single rejection region.

Note that in both the left-tailed and right-tailed tests, the equality sign is placed in the null hypothesis. This is because the null hypothesis is tested at a specific α value (such as 5%), and the equality sign gives the null hypothesis a specific value (such as 16) to test against.

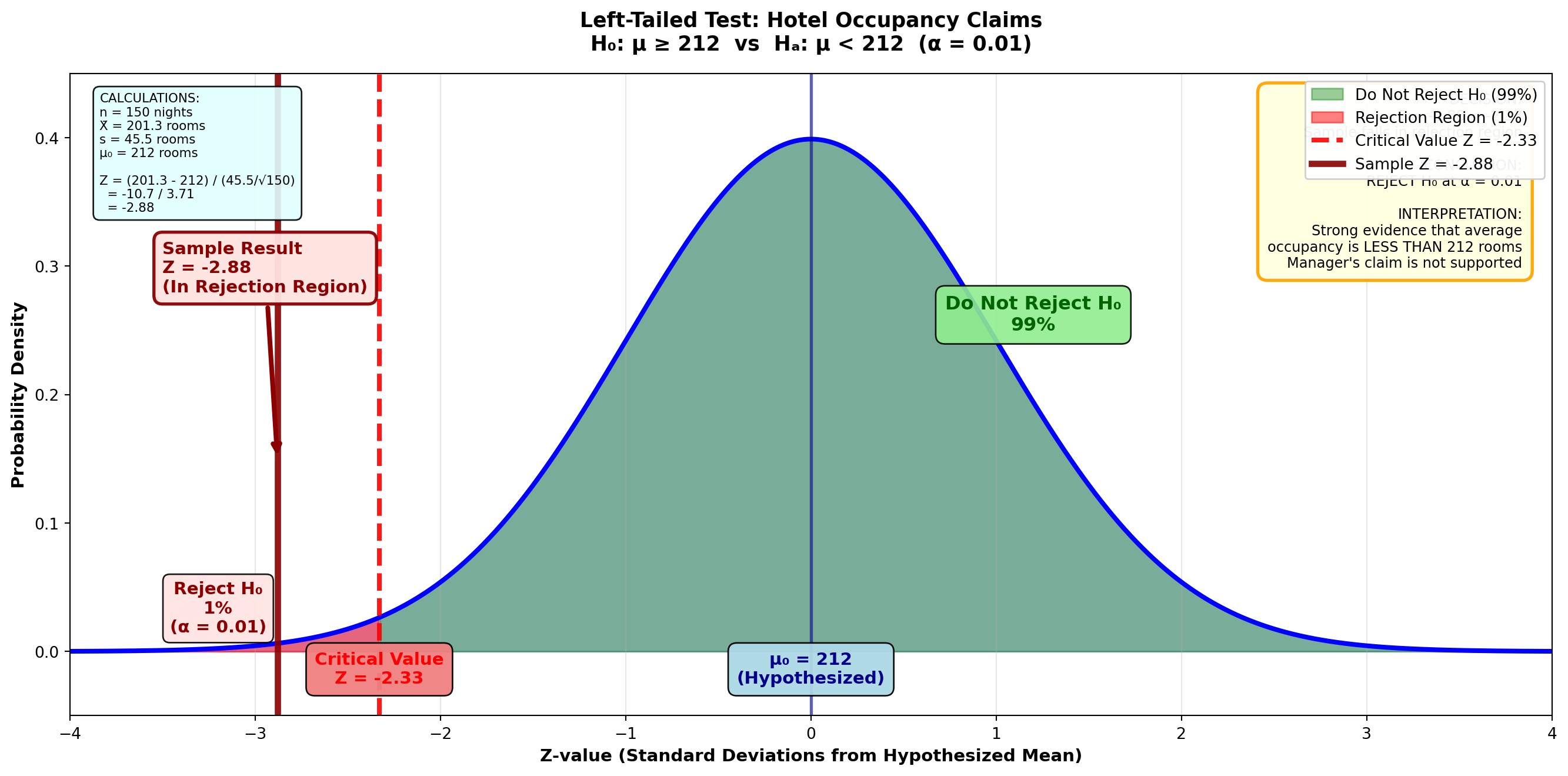

9.10.4 Example 8.2: Hotel Occupancy Claims (Left-Tailed Test)

Scenario: Embassy Suites Hotel Manager’s Report

At a corporate briefing, the manager of the Embassy Suites hotel in Atlanta reported that the average number of rooms rented per night is at least 212 (μ ≥ 212). One corporate official believes this figure may be somewhat overestimated.

Sample Data: - Sample size: n = 150 nights - Sample mean: X̄ = 201.3 rooms - Sample standard deviation: s = 45.5 rooms

If these results suggest the manager has “inflated” his report, he will be severely reprimanded. At a 1% significance level, what is the manager’s fate?

Solution:

Step 1: State the Hypotheses

The manager’s claim that μ ≥ 212 contains the equality sign and therefore serves as the null hypothesis:

H_0: \mu \geq 212 \quad H_A: \mu < 212

Values above 212 will not cause rejection of the null hypothesis, which clearly allows for values exceeding 212. Only values significantly below 212 will lead to rejection of μ ≥ 212.

Therefore, this is a left-tailed test.

Step 2: Calculate the Test Statistic

Z = \frac{201.3 - 212}{\frac{45.5}{\sqrt{150}}} = \frac{-10.7}{3.71} = -2.88

Step 3: Determine the Decision Rule

With a 1% significance level (α = 0.01) in a one-tailed test:

- The entire 1% is placed in the left tail

- Area between mean and critical value = 0.5000 - 0.0100 = 0.4900

- From Z-table: Area of 0.4900 corresponds to Z = -2.33

Figure: Left-Tailed Test for Hotel Occupancy (α = 0.01)

Code

import matplotlib.pyplot as plt

import numpy as np

from scipy import stats

fig, ax = plt.subplots(figsize=(14, 7))

# Generate standard normal distribution

x = np.linspace(-4, 4, 1000)

y = stats.norm.pdf(x, 0, 1)

# Plot the distribution

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.2, color='blue')

# Shade non-rejection region (right 99%)

x_right = x[x >= -2.33]

y_right = stats.norm.pdf(x_right, 0, 1)

ax.fill_between(x_right, y_right, alpha=0.4, color='green',

label='Do Not Reject H₀ (99%)')

# Shade rejection region (left 1%)

x_left = x[x < -2.33]

y_left = stats.norm.pdf(x_left, 0, 1)

ax.fill_between(x_left, y_left, alpha=0.5, color='red',

label='Rejection Region (1%)')

# Draw critical value line

ax.axvline(-2.33, color='red', linestyle='--', linewidth=3, alpha=0.9,

label='Critical Value Z = -2.33')

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

# Draw sample test statistic

ax.axvline(-2.88, color='darkred', linestyle='-', linewidth=4, alpha=0.9,

label='Sample Z = -2.88')

# Add critical value labels

ax.text(-2.33, -0.025, 'Critical Value\nZ = -2.33', ha='center', fontsize=11,

fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(0, -0.025, 'μ₀ = 212\n(Hypothesized)', ha='center', fontsize=11,

fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightblue', alpha=0.95))

# Sample statistic label with arrow

ax.annotate('Sample Result\nZ = -2.88\n(In Rejection Region)',

xy=(-2.88, 0.15), xytext=(-3.5, 0.28),

fontsize=11, fontweight='bold', color='darkred',

arrowprops=dict(arrowstyle='->', lw=3, color='darkred'),

bbox=dict(boxstyle='round,pad=0.5', facecolor='mistyrose',

edgecolor='darkred', linewidth=2, alpha=0.95))

# Add percentage labels

ax.text(-3.2, 0.015, 'Reject H₀\n1%\n(α = 0.01)', ha='center', fontsize=11,

fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.9))

ax.text(1.2, 0.25, 'Do Not Reject H₀\n99%', ha='center', fontsize=12,

fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightgreen', alpha=0.9))

# Formatting

ax.set_title('Left-Tailed Test: Hotel Occupancy Claims\n' +

'H₀: μ ≥ 212 vs Hₐ: μ < 212 (α = 0.01)',

fontsize=13, fontweight='bold', pad=15)

ax.set_xlabel('Z-value (Standard Deviations from Hypothesized Mean)',

fontsize=11, fontweight='bold')

ax.set_ylabel('Probability Density', fontsize=11, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.05, 0.45)

ax.grid(True, alpha=0.3, axis='x')

ax.legend(loc='upper right', fontsize=10, framealpha=0.95)

# Add decision box

decision_text = (

"DECISION:\n"

"Z = -2.88 < -2.33\n"

"Sample falls in rejection region\n\n"

"CONCLUSION:\n"

"REJECT H₀ at α = 0.01\n\n"

"INTERPRETATION:\n"

"Strong evidence that average\n"

"occupancy is LESS THAN 212 rooms\n"

"Manager's claim is not supported"

)

ax.text(0.98, 0.97, decision_text, transform=ax.transAxes,

fontsize=9, verticalalignment='top', horizontalalignment='right',

bbox=dict(boxstyle='round,pad=0.7', facecolor='lightyellow',

edgecolor='orange', linewidth=2, alpha=0.95))

# Add calculation box

calc_text = (

"CALCULATIONS:\n"

"n = 150 nights\n"

"X̄ = 201.3 rooms\n"

"s = 45.5 rooms\n"

"μ₀ = 212 rooms\n\n"

"Z = (201.3 - 212) / (45.5/√150)\n"

" = -10.7 / 3.71\n"

" = -2.88"

)

ax.text(0.02, 0.97, calc_text, transform=ax.transAxes,

fontsize=8, verticalalignment='top',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcyan', alpha=0.9))

plt.tight_layout()

plt.show()Do not reject H₀ if Z ≥ -2.33

Reject H₀ if Z < -2.33

Step 4: Conclusion

The Z-value of -2.88 is clearly in the rejection region (Z < -2.33). The null hypothesis H₀: μ ≥ 212 is not confirmed.

Interpretation: It appears the manager has overstated his occupancy rate and will apparently receive a reprimand from the home office.

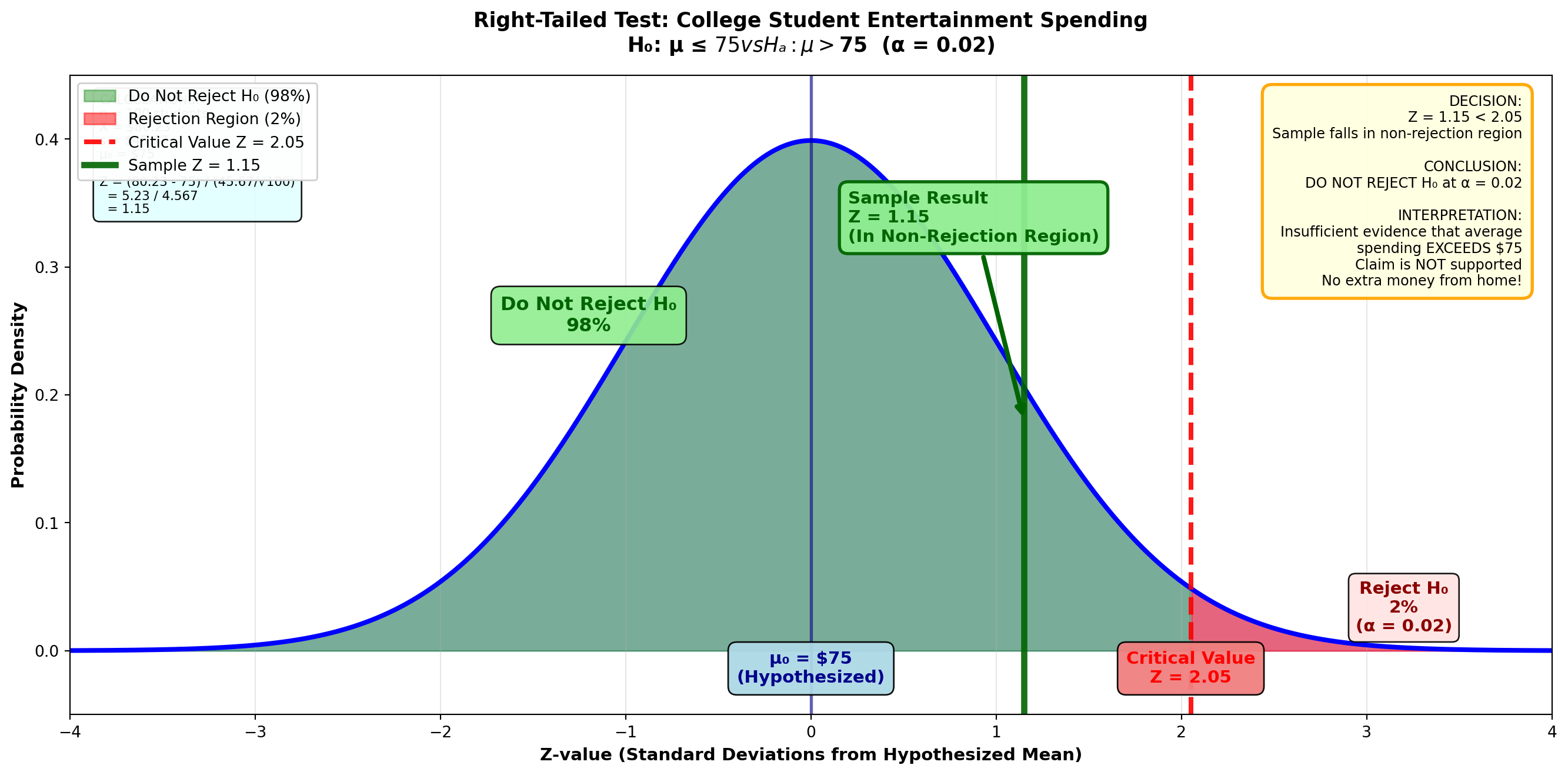

9.10.5 Example 8.3: College Student Entertainment Spending (Right-Tailed Test)

Scenario: Requesting Additional Financial Support

A survey conducted by the National Collegiate Students’ Association showed that college students nationwide spend on average more than $75 monthly on entertainment. If you can find evidence to confirm this claim, you could use it to request additional monetary help from home.

Sample Data: - Sample size: n = 100 students - Sample mean: X̄ = $80.23 - Sample standard deviation: s = $45.67

At a 2% significance level, is there justification for the request?

Solution:

Step 1: State the Hypotheses

The claim that the mean is more than $75 serves as the alternative hypothesis because μ > 75 doesn’t contain an equality sign. The hypotheses are:

H_0: \mu \leq 75 \quad H_A: \mu > 75

A right-tailed test is required because lower values wouldn’t lead to rejection of the null hypothesis.

Step 2: Calculate the Test Statistic

Z = \frac{80.23 - 75}{\frac{45.67}{\sqrt{100}}} = \frac{5.23}{4.567} = 1.15

Step 3: Determine the Decision Rule

With α = 0.02 in a right-tailed test:

- The entire 2% is placed in the right tail

- Area between mean and critical value = 0.5000 - 0.0200 = 0.4800

- From Z-table: Area of 0.4800 corresponds to Z = 2.05

Figure: Right-Tailed Test for Student Spending (α = 0.02)

Code

import matplotlib.pyplot as plt

import numpy as np

from scipy import stats

fig, ax = plt.subplots(figsize=(14, 7))

# Generate standard normal distribution

x = np.linspace(-4, 4, 1000)

y = stats.norm.pdf(x, 0, 1)

# Plot the distribution

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.2, color='blue')

# Shade non-rejection region (left 98%)

x_left = x[x <= 2.05]

y_left = stats.norm.pdf(x_left, 0, 1)

ax.fill_between(x_left, y_left, alpha=0.4, color='green',

label='Do Not Reject H₀ (98%)')

# Shade rejection region (right 2%)

x_right = x[x > 2.05]

y_right = stats.norm.pdf(x_right, 0, 1)

ax.fill_between(x_right, y_right, alpha=0.5, color='red',

label='Rejection Region (2%)')

# Draw critical value line

ax.axvline(2.05, color='red', linestyle='--', linewidth=3, alpha=0.9,

label='Critical Value Z = 2.05')

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

# Draw sample test statistic

ax.axvline(1.15, color='darkgreen', linestyle='-', linewidth=4, alpha=0.9,

label='Sample Z = 1.15')

# Add critical value labels

ax.text(2.05, -0.025, 'Critical Value\nZ = 2.05', ha='center', fontsize=11,

fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(0, -0.025, 'μ₀ = $75\n(Hypothesized)', ha='center', fontsize=11,

fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightblue', alpha=0.95))

# Sample statistic label with arrow

ax.annotate('Sample Result\nZ = 1.15\n(In Non-Rejection Region)',

xy=(1.15, 0.18), xytext=(0.2, 0.32),

fontsize=11, fontweight='bold', color='darkgreen',

arrowprops=dict(arrowstyle='->', lw=3, color='darkgreen'),

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightgreen',

edgecolor='darkgreen', linewidth=2, alpha=0.95))

# Add percentage labels

ax.text(3.2, 0.015, 'Reject H₀\n2%\n(α = 0.02)', ha='center', fontsize=11,

fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.9))

ax.text(-1.2, 0.25, 'Do Not Reject H₀\n98%', ha='center', fontsize=12,

fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightgreen', alpha=0.9))

# Formatting

ax.set_title('Right-Tailed Test: College Student Entertainment Spending\n' +

'H₀: μ ≤ $75 vs Hₐ: μ > $75 (α = 0.02)',

fontsize=13, fontweight='bold', pad=15)

ax.set_xlabel('Z-value (Standard Deviations from Hypothesized Mean)',

fontsize=11, fontweight='bold')

ax.set_ylabel('Probability Density', fontsize=11, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.05, 0.45)

ax.grid(True, alpha=0.3, axis='x')

ax.legend(loc='upper left', fontsize=10, framealpha=0.95)

# Add decision box

decision_text = (

"DECISION:\n"

"Z = 1.15 < 2.05\n"

"Sample falls in non-rejection region\n\n"

"CONCLUSION:\n"

"DO NOT REJECT H₀ at α = 0.02\n\n"

"INTERPRETATION:\n"

"Insufficient evidence that average\n"

"spending EXCEEDS $75\n"

"Claim is NOT supported\n"

"No extra money from home!"

)

ax.text(0.98, 0.97, decision_text, transform=ax.transAxes,

fontsize=9, verticalalignment='top', horizontalalignment='right',

bbox=dict(boxstyle='round,pad=0.7', facecolor='lightyellow',

edgecolor='orange', linewidth=2, alpha=0.95))

# Add calculation box

calc_text = (

"CALCULATIONS:\n"

"n = 100 students\n"

"X̄ = $80.23\n"

"s = $45.67\n"

"μ₀ = $75\n\n"

"Z = (80.23 - 75) / (45.67/√100)\n"

" = 5.23 / 4.567\n"

" = 1.15"

)

ax.text(0.02, 0.97, calc_text, transform=ax.transAxes,

fontsize=8, verticalalignment='top',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcyan', alpha=0.9))

plt.tight_layout()

plt.show()Do not reject H₀ if Z ≤ 2.05

Reject H₀ if Z > 2.05

Step 4: Conclusion

Because Z = 1.15 < 2.05, we do not reject the null hypothesis H₀: μ ≤ 75. It appears the average entertainment cost is not greater than $75.

Interpretation: Despite your decadent lifestyle, the typical student doesn’t spend more than $75. You’ll have to find another way to obtain more money from home!

9.11 Section Exercises: One-Tailed Tests

17. Explain in your own words the difference between one-tailed and two-tailed hypothesis tests. Give examples of both.

18. Why does the equality sign always go in the null hypothesis?

19. Explain clearly why a null hypothesis of H₀: μ ≤ 10 requires a right-tailed test, while a null hypothesis of H₀: μ ≥ 10 requires a left-tailed test.

20. Raynor & Sons Advertising Effect: During recent months, Raynor & Sons has advertised its electrical supply business extensively. Mr. Raynor hopes the result has been to increase average weekly sales above the $7,880 the company experienced in the past. A sample of 36 weeks yields a mean of $8,023 with a standard deviation of $1,733. At a 1% significance level, does it appear the advertising has produced an effect?

21. Hardee’s Menu Decision: In fall 1997, Hardee’s, the fast-food giant, was acquired by a California company that plans to eliminate the fried chicken line from the menu. The claim was that recent revenues had fallen below the average of $4,500 experienced in the past. Does this seem like a wise decision if 144 observations reveal a mean of $4,477 and a standard deviation of $1,228? Management is willing to accept a 2% probability of committing a Type I error.

22. Sporting Goods Marketing to Younger Consumers: According to The Wall Street Journal (May 12, 1997), many sporting goods companies are trying to market their products to younger consumers. The article suggested the average age of consumers had fallen below the 34.4-year age group that characterized the early 1990s. If a sample of 1,000 customers reports a mean of 33.2 years and a standard deviation of 9.4, what can be concluded at a 4% significance level?

23. Forbes Exclusive Retreats: The July 1997 issue of Forbes magazine reported on exclusive “hideaways” in upstate New York and its surroundings used by wealthy executives to escape the tedium of their stressful daily lives. The cost is very reasonable, the article reported. You can hire weekend lodging for less than $3,500. Is this “reasonable” figure confirmed at a 5% significance level if a sample of 60 resorts have an average cost of $3,200 and s = $950?

24. Hyundai Sales Decline: In the early 1990s, Hyundai, the Korean automobile manufacturer, suffered a severe sales drop below its monthly peak of 25,000 units in May 1988. Hyundai Motor America (summer 1997) reported that sales had fallen to less than 10,000 units. During a 48-month period beginning in January 1990, average sales were 9,204 units. Assume a standard deviation of 944 units. At a 1% significance level, does it appear the average number of units has fallen below the 10,000 mark?

25. Baskin-Robbins Store Openings: Baskin-Robbins, the ice cream franchise, claims the number of stores opening has increased above the weekly average of 10.4 experienced during lean times (The Wall Street Journal, February 1997). Is there evidence to support this claim if 50 weeks show a mean of 12.5 and a standard deviation of 0.66 stores? Management is willing to accept a 4% probability of rejecting the null hypothesis if it’s true.

26. Atlantic Mutual Insurance Coverage: A recent advertisement claims the amount of property and marine insurance underwritten by Atlantic Mutual is at least $325,500 per month. Forty months report a mean of $330,000 and s = $112,300. At a 5% significance level, does Atlantic Mutual’s claim appear valid?

9.12 8.9 p-Values: Use and Interpretation

As we’ve seen, to test a hypothesis we calculate a Z-value and compare it with a critical Z-value based on the selected significance level. While the p-value of a test can serve as an alternative method for testing hypotheses, it’s actually much more than that.

In this section, we develop a strict definition of the p-value and the role it can play in hypothesis testing. You should thoroughly understand why the p-value is defined the way it is, how to calculate it for both two-tailed and one-tailed tests, and how to interpret it.

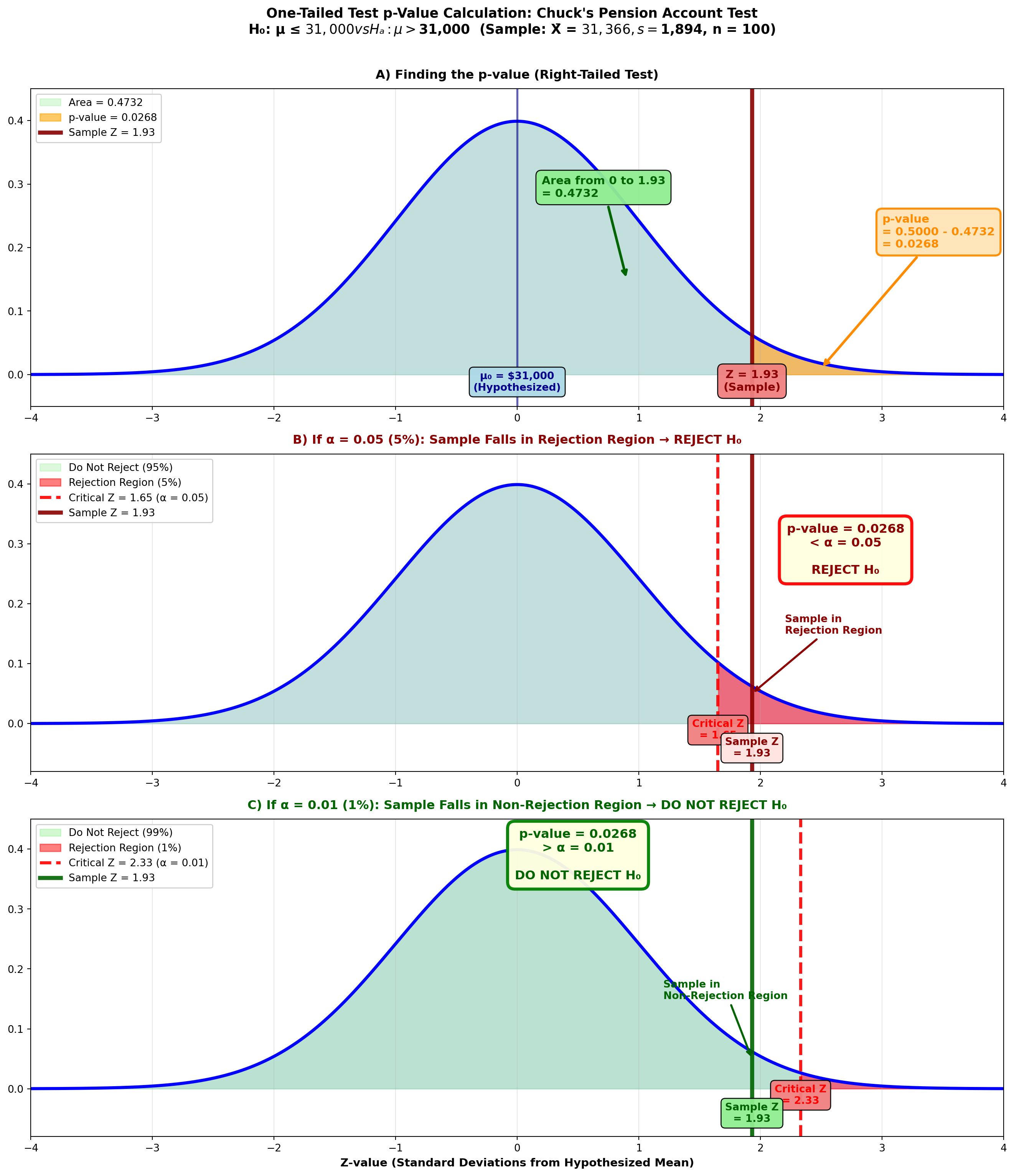

9.12.1 p-Value for a One-Tailed Test

Let’s begin with a one-tailed test. Suppose Chuck Cash is the chief of personnel. From a brief analysis of employee records, Chuck believes employees average more than $31,000 in their pension accounts (μ > 31,000). Sampling 100 employees, Chuck finds a mean of $31,366 with s = $1,894.

Chuck wants to calculate the p-value related to this right-tailed test.

Step 1: State the Hypotheses

H_0: \mu \leq 31,000 \quad H_A: \mu > 31,000

Step 2: Calculate the Test Statistic

Z = \frac{31,366 - 31,000}{\frac{1,894}{\sqrt{100}}} = \frac{366}{189.4} = 1.93

Step 3: Calculate the p-Value

The p-value is the area in the tail beyond the sample test statistic of Z = 1.93.

Figure 8.4: One-Tailed Test p-Value Calculation

Code

import matplotlib.pyplot as plt

import numpy as np

from scipy import stats

fig, axes = plt.subplots(3, 1, figsize=(14, 16))

# Panel A: Finding the p-value

ax = axes[0]

x = np.linspace(-4, 4, 1000)

y = stats.norm.pdf(x, 0, 1)

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.15, color='blue')

# Shade area to left of 1.93

x_left = x[x <= 1.93]

y_left = stats.norm.pdf(x_left, 0, 1)

ax.fill_between(x_left, y_left, alpha=0.3, color='lightgreen',

label='Area = 0.4732')

# Shade p-value region (right tail)

x_right = x[x > 1.93]

y_right = stats.norm.pdf(x_right, 0, 1)

ax.fill_between(x_right, y_right, alpha=0.6, color='orange',

label='p-value = 0.0268')

ax.axvline(1.93, color='darkred', linestyle='-', linewidth=4, alpha=0.9,

label='Sample Z = 1.93')

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

ax.text(1.93, -0.025, 'Z = 1.93\n(Sample)', ha='center', fontsize=11,

fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(0, -0.025, 'μ₀ = $31,000\n(Hypothesized)', ha='center', fontsize=10,

fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightblue', alpha=0.95))

ax.annotate('Area from 0 to 1.93\n= 0.4732',

xy=(0.9, 0.15), xytext=(0.2, 0.28),

fontsize=11, fontweight='bold', color='darkgreen',

arrowprops=dict(arrowstyle='->', lw=2.5, color='darkgreen'),

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightgreen', alpha=0.95))

ax.annotate('p-value\n= 0.5000 - 0.4732\n= 0.0268',

xy=(2.5, 0.01), xytext=(3.0, 0.2),

fontsize=11, fontweight='bold', color='darkorange',

arrowprops=dict(arrowstyle='->', lw=2.5, color='darkorange'),

bbox=dict(boxstyle='round,pad=0.5', facecolor='moccasin',

edgecolor='darkorange', linewidth=2, alpha=0.95))

ax.set_title('A) Finding the p-value (Right-Tailed Test)',

fontsize=12, fontweight='bold', pad=10)

ax.set_xlim(-4, 4)

ax.set_ylim(-0.05, 0.45)

ax.grid(True, alpha=0.3, axis='x')

ax.legend(loc='upper left', fontsize=10, framealpha=0.95)

# Panel B: α = 0.05 (Reject H₀)

ax = axes[1]

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.15, color='blue')

# Shade non-rejection region

x_left_b = x[x <= 1.65]

y_left_b = stats.norm.pdf(x_left_b, 0, 1)

ax.fill_between(x_left_b, y_left_b, alpha=0.3, color='lightgreen',

label='Do Not Reject (95%)')

# Shade rejection region

x_right_b = x[x > 1.65]

y_right_b = stats.norm.pdf(x_right_b, 0, 1)

ax.fill_between(x_right_b, y_right_b, alpha=0.5, color='red',

label='Rejection Region (5%)')

ax.axvline(1.65, color='red', linestyle='--', linewidth=3, alpha=0.9,

label='Critical Z = 1.65 (α = 0.05)')

ax.axvline(1.93, color='darkred', linestyle='-', linewidth=4, alpha=0.9,

label='Sample Z = 1.93')

ax.text(1.65, -0.025, 'Critical Z\n= 1.65', ha='center', fontsize=10,

fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightcoral', alpha=0.95))

ax.text(1.93, -0.055, 'Sample Z\n= 1.93', ha='center', fontsize=10,

fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.4', facecolor='mistyrose', alpha=0.95))

ax.text(2.7, 0.25, 'p-value = 0.0268\n< α = 0.05\n\nREJECT H₀',

ha='center', fontsize=12, fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.6', facecolor='lightyellow',

edgecolor='red', linewidth=3, alpha=0.95))

ax.annotate('Sample in\nRejection Region',

xy=(1.93, 0.05), xytext=(2.2, 0.15),

fontsize=10, fontweight='bold', color='darkred',

arrowprops=dict(arrowstyle='->', lw=2, color='darkred'))

ax.set_title('B) If α = 0.05 (5%): Sample Falls in Rejection Region → REJECT H₀',

fontsize=12, fontweight='bold', pad=10, color='darkred')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.08, 0.45)

ax.grid(True, alpha=0.3, axis='x')

ax.legend(loc='upper left', fontsize=10, framealpha=0.95)

# Panel C: α = 0.01 (Do Not Reject H₀)

ax = axes[2]

ax.plot(x, y, 'b-', linewidth=3)

ax.fill_between(x, y, alpha=0.15, color='blue')

# Shade non-rejection region

x_left_c = x[x <= 2.33]

y_left_c = stats.norm.pdf(x_left_c, 0, 1)

ax.fill_between(x_left_c, y_left_c, alpha=0.4, color='lightgreen',

label='Do Not Reject (99%)')

# Shade rejection region

x_right_c = x[x > 2.33]

y_right_c = stats.norm.pdf(x_right_c, 0, 1)

ax.fill_between(x_right_c, y_right_c, alpha=0.5, color='red',

label='Rejection Region (1%)')

ax.axvline(2.33, color='red', linestyle='--', linewidth=3, alpha=0.9,

label='Critical Z = 2.33 (α = 0.01)')

ax.axvline(1.93, color='darkgreen', linestyle='-', linewidth=4, alpha=0.9,

label='Sample Z = 1.93')

ax.text(2.33, -0.025, 'Critical Z\n= 2.33', ha='center', fontsize=10,

fontweight='bold', color='red',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightcoral', alpha=0.95))

ax.text(1.93, -0.055, 'Sample Z\n= 1.93', ha='center', fontsize=10,

fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightgreen', alpha=0.95))

ax.text(0.5, 0.35, 'p-value = 0.0268\n> α = 0.01\n\nDO NOT REJECT H₀',

ha='center', fontsize=12, fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.6', facecolor='lightyellow',

edgecolor='green', linewidth=3, alpha=0.95))

ax.annotate('Sample in\nNon-Rejection Region',

xy=(1.93, 0.05), xytext=(1.2, 0.15),

fontsize=10, fontweight='bold', color='darkgreen',

arrowprops=dict(arrowstyle='->', lw=2, color='darkgreen'))

ax.set_title('C) If α = 0.01 (1%): Sample Falls in Non-Rejection Region → DO NOT REJECT H₀',

fontsize=12, fontweight='bold', pad=10, color='darkgreen')

ax.set_xlabel('Z-value (Standard Deviations from Hypothesized Mean)',

fontsize=11, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.08, 0.45)

ax.grid(True, alpha=0.3, axis='x')

ax.legend(loc='upper left', fontsize=10, framealpha=0.95)

plt.suptitle('One-Tailed Test p-Value Calculation: Chuck\'s Pension Account Test\n' +

'H₀: μ ≤ $31,000 vs Hₐ: μ > $31,000 (Sample: X̄ = $31,366, s = $1,894, n = 100)',

fontsize=13, fontweight='bold', y=0.995)

plt.tight_layout(rect=[0, 0, 1, 0.99])

plt.show()From the Z-table: Z = 1.93 gives area = 0.4732

p-value = 0.5000 - 0.4732 = 0.0268 or 2.68%

9.12.2 Interpreting the p-Value

What does this p-value of 2.68% tell Chuck?

The p-value is defined as the lowest significance level (minimum alpha value) at which the null hypothesis can be rejected.

For example, Figure 8.4 (B) shows that if we set α at a value greater than 0.0268, such as 0.05:

- Area of 0.4500 requires critical Z-value of 1.65

- The sample test statistic Z = 1.93 falls in the rejection region

- Therefore, we reject H₀

On the other hand, Figure 8.4 (C) shows that if we select an α value less than 0.0268, such as 0.01:

- Area of 0.4900 specifies critical Z-value of 2.33

- The sample test statistic Z = 1.93 falls in the non-rejection region

- Therefore, we do not reject H₀

Chuck can lower the α value for the test down to 0.0268 without placing the sample test statistic in the non-rejection region. That is, an α value of 0.0268 is the lowest value Chuck can set and still reject the null hypothesis.

The p-value tells you what decision you’ll reach at any selected α value:

If p-value < α → Reject H₀

If p-value ≥ α → Do not reject H₀

9.12.3 p-Value with Statistical Software

Python Output for Chuck’s One-Tailed Test:

Z-Test

Test of mu = 31000 vs mu > 31000

The assumed sigma = 1894

Variable N Mean StDev SE Mean Z P-Value

Amount 100 31,366 1,894 189 1.93 0.0268The output provides the Z-value (1.93) and p-value (0.0268) that Chuck calculated.

Many computer programs report only p-values for two-tailed tests. If you’re performing a one-tailed test, divide the reported p-value by 2 to obtain the one-tailed value.

However, if you follow proper instructions (selecting “greater than” or “less than” for the alternative hypothesis), Python will provide the correct one-tailed p-value.

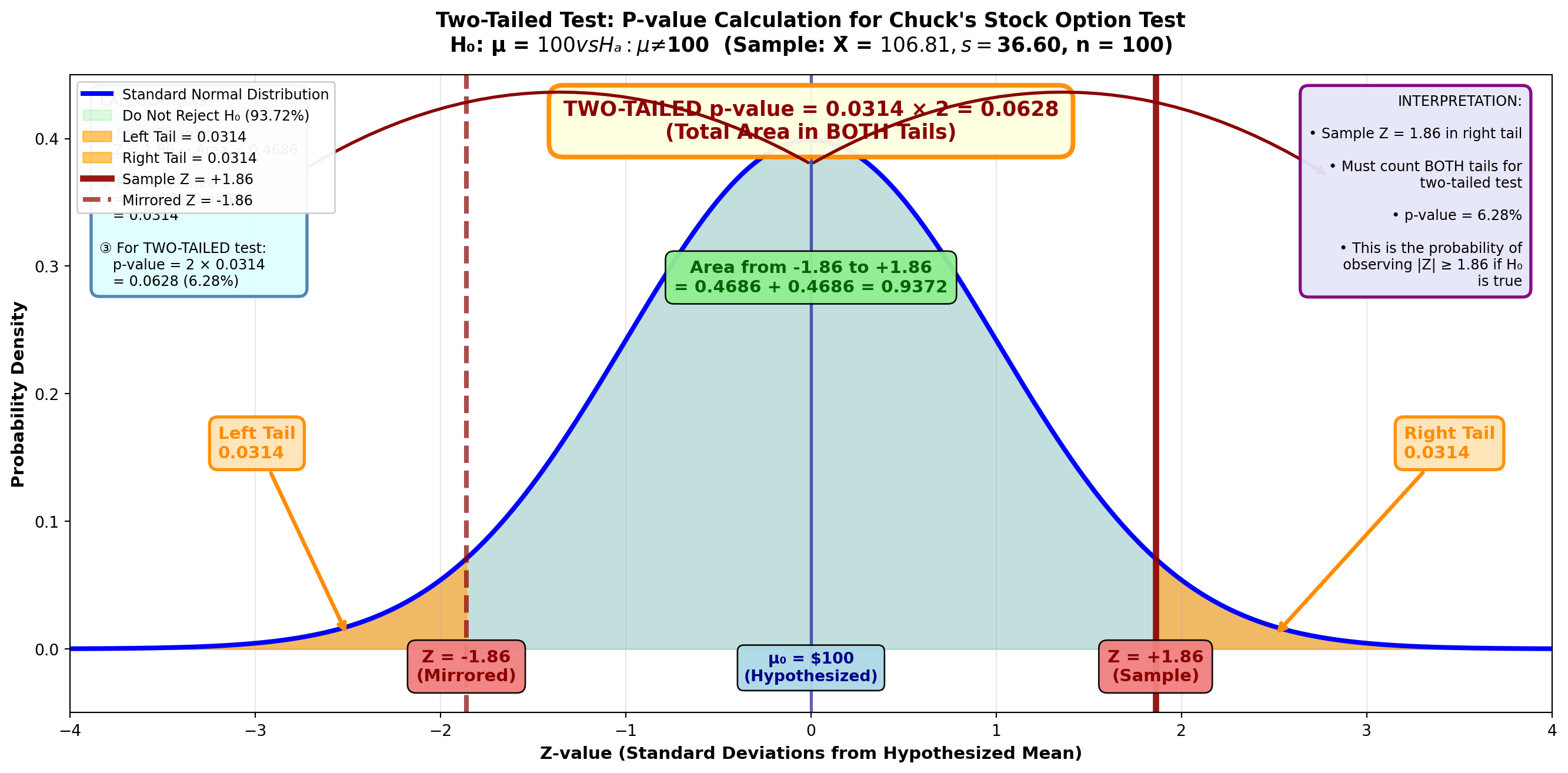

9.12.4 p-Value for a Two-Tailed Test

Calculating the p-value for a two-tailed test is very similar, with a slight twist at the end.

Suppose Chuck also suspects employees invest an average of $100 monthly in the company stock option plan (μ = 100). Sampling 100 employees, Chuck discovers a mean of $106.81 with a standard deviation of $36.60.

He now wants to determine the p-value related to the hypothesis test:

H_0: \mu = 100 \quad H_A: \mu \neq 100

Step 1: Calculate the Test Statistic

Z = \frac{106.81 - 100}{\frac{36.60}{\sqrt{100}}} = \frac{6.81}{3.66} = 1.86

Step 2: Calculate the p-Value

To calculate the p-value, Chuck determines the area in the tail beyond the sample test statistic of Z = 1.86.

Figure 8.5: Two-Tailed Test p-Value Calculation

Code

import matplotlib.pyplot as plt

import numpy as np

from scipy import stats

fig, ax = plt.subplots(figsize=(14, 7))

# Generate standard normal distribution

x = np.linspace(-4, 4, 1000)

y = stats.norm.pdf(x, 0, 1)

# Plot the distribution

ax.plot(x, y, 'b-', linewidth=3, label='Standard Normal Distribution')

ax.fill_between(x, y, alpha=0.15, color='blue')

# Shade the middle (non-rejection) region

x_middle = x[(x > -1.86) & (x < 1.86)]

y_middle = stats.norm.pdf(x_middle, 0, 1)

ax.fill_between(x_middle, y_middle, alpha=0.3, color='lightgreen',

label='Do Not Reject H₀ (93.72%)')

# Shade LEFT tail (p-value region)

x_left = x[x <= -1.86]

y_left = stats.norm.pdf(x_left, 0, 1)

ax.fill_between(x_left, y_left, alpha=0.6, color='orange',

label='Left Tail = 0.0314')

# Shade RIGHT tail (p-value region)

x_right = x[x >= 1.86]

y_right = stats.norm.pdf(x_right, 0, 1)

ax.fill_between(x_right, y_right, alpha=0.6, color='orange',

label='Right Tail = 0.0314')

# Draw sample statistic lines

ax.axvline(1.86, color='darkred', linestyle='-', linewidth=4, alpha=0.9,

label='Sample Z = +1.86')

ax.axvline(-1.86, color='darkred', linestyle='--', linewidth=3, alpha=0.7,

label='Mirrored Z = -1.86')

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

# Add value labels

ax.text(1.86, -0.025, 'Z = +1.86\n(Sample)', ha='center', fontsize=11,

fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(-1.86, -0.025, 'Z = -1.86\n(Mirrored)', ha='center', fontsize=11,

fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(0, -0.025, 'μ₀ = $100\n(Hypothesized)', ha='center', fontsize=10,

fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightblue', alpha=0.95))

# Add tail area labels with arrows

ax.annotate('Left Tail\n0.0314',

xy=(-2.5, 0.01), xytext=(-3.2, 0.15),

fontsize=11, fontweight='bold', color='darkorange',

arrowprops=dict(arrowstyle='->', lw=2.5, color='darkorange'),

bbox=dict(boxstyle='round,pad=0.5', facecolor='moccasin',

edgecolor='darkorange', linewidth=2, alpha=0.95))

ax.annotate('Right Tail\n0.0314',

xy=(2.5, 0.01), xytext=(3.2, 0.15),

fontsize=11, fontweight='bold', color='darkorange',

arrowprops=dict(arrowstyle='->', lw=2.5, color='darkorange'),

bbox=dict(boxstyle='round,pad=0.5', facecolor='moccasin',

edgecolor='darkorange', linewidth=2, alpha=0.95))

# Add middle area label

ax.text(0, 0.28, 'Area from -1.86 to +1.86\n= 0.4686 + 0.4686 = 0.9372',

ha='center', fontsize=11, fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightgreen', alpha=0.95))

# Add total p-value label

ax.text(0, 0.40, 'TWO-TAILED p-value = 0.0314 × 2 = 0.0628\n(Total Area in BOTH Tails)',

ha='center', fontsize=13, fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.7', facecolor='lightyellow',

edgecolor='darkorange', linewidth=3, alpha=0.95))

# Add curved arrows showing both tails

ax.annotate('', xy=(-2.8, 0.37), xytext=(0, 0.38),

arrowprops=dict(arrowstyle='->', lw=2, color='darkred',

connectionstyle="arc3,rad=.3"))

ax.annotate('', xy=(2.8, 0.37), xytext=(0, 0.38),

arrowprops=dict(arrowstyle='->', lw=2, color='darkred',

connectionstyle="arc3,rad=-.3"))

# Formatting

ax.set_title('Two-Tailed Test: P-value Calculation for Chuck\'s Stock Option Test\n' +

'H₀: μ = $100 vs Hₐ: μ ≠ $100 (Sample: X̄ = $106.81, s = $36.60, n = 100)',

fontsize=13, fontweight='bold', pad=15)

ax.set_xlabel('Z-value (Standard Deviations from Hypothesized Mean)',

fontsize=11, fontweight='bold')

ax.set_ylabel('Probability Density', fontsize=11, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.05, 0.45)

ax.grid(True, alpha=0.3, axis='x')

ax.legend(loc='upper left', fontsize=9, framealpha=0.95)

# Add calculation box

calc_text = (

"CALCULATIONS:\n\n"

"① From Z-table:\n"

" Z = 1.86 → Area = 0.4686\n\n"

"② Right tail area:\n"

" = 0.5000 - 0.4686\n"

" = 0.0314\n\n"

"③ For TWO-TAILED test:\n"

" p-value = 2 × 0.0314\n"

" = 0.0628 (6.28%)"

)

ax.text(0.02, 0.97, calc_text, transform=ax.transAxes,

fontsize=9, verticalalignment='top',

bbox=dict(boxstyle='round,pad=0.6', facecolor='lightcyan',

edgecolor='steelblue', linewidth=2, alpha=0.95))

# Add interpretation box

interp_text = (

"INTERPRETATION:\n\n"

"• Sample Z = 1.86 in right tail\n\n"

"• Must count BOTH tails for\n"

" two-tailed test\n\n"

"• p-value = 6.28%\n\n"

"• This is the probability of\n"

" observing |Z| ≥ 1.86 if H₀\n"

" is true"

)

ax.text(0.98, 0.97, interp_text, transform=ax.transAxes,

fontsize=9, verticalalignment='top', horizontalalignment='right',

bbox=dict(boxstyle='round,pad=0.6', facecolor='lavender',

edgecolor='purple', linewidth=2, alpha=0.95))

plt.tight_layout()

plt.show()From the Z-table: Z = 1.86 gives area = 0.4686

Area in right tail = 0.5000 - 0.4686 = 0.0314

Unlike a one-tailed test, this area must be multiplied by 2 to obtain the p-value.

This is necessary because in a two-tailed test, the α value is divided between the two rejection regions.

p-value = 0.0314 × 2 = 0.0628 or 6.28%

One-tailed test: p-value = area in tail beyond test statistic

Two-tailed test: p-value = 2 × (area in tail beyond test statistic)

Python Output for Chuck’s Two-Tailed Test:

Z-Test

Test of mu = 100.00 vs mu not = 100.00

The assumed sigma = 36.6

Variable N Mean StDev SE Mean Z P-Value

Dollars 100 106.81 36.60 3.66 1.86 0.063Note that the p-value of 0.063 is for a two-tailed hypothesis and doesn’t need to be multiplied by two—Python has already done this.

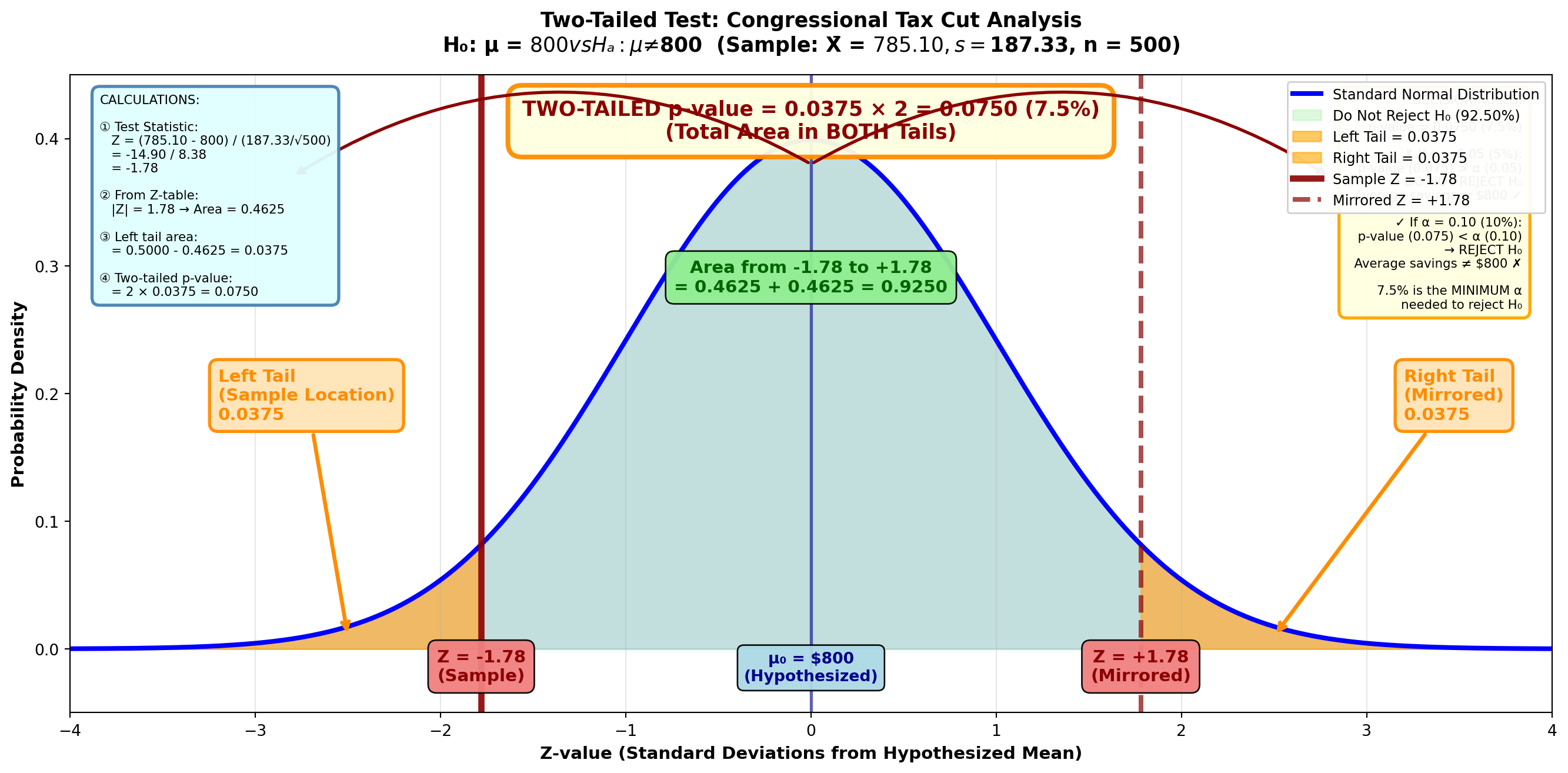

9.12.5 Example 8.4: Congressional Tax Cut Analysis

Scenario: In May 1997, Congress passed a federal budget containing several tax cut provisions. Analysts claimed it would save the average taxpayer $800 per year.

Sample Data: - Sample size: n = 500 taxpayers - Sample mean: X̄ = $785.10 - Sample standard deviation: s = $187.33

Calculate and interpret the p-value.

Solution:

Step 1: State the Hypotheses

H_0: \mu = 800 \quad H_A: \mu \neq 800

Step 2: Calculate the Test Statistic

Z = \frac{785.10 - 800}{\frac{187.33}{\sqrt{500}}} = \frac{-14.90}{8.38} = -1.78

Step 3: Calculate the p-Value

Figure: p-Value for Tax Cut Analysis

Code

import matplotlib.pyplot as plt

import numpy as np

from scipy import stats

fig, ax = plt.subplots(figsize=(14, 7))

# Generate standard normal distribution

x = np.linspace(-4, 4, 1000)

y = stats.norm.pdf(x, 0, 1)

# Plot the distribution

ax.plot(x, y, 'b-', linewidth=3, label='Standard Normal Distribution')

ax.fill_between(x, y, alpha=0.15, color='blue')

# Shade the middle (non-rejection) region

x_middle = x[(x > -1.78) & (x < 1.78)]

y_middle = stats.norm.pdf(x_middle, 0, 1)

ax.fill_between(x_middle, y_middle, alpha=0.3, color='lightgreen',

label='Do Not Reject H₀ (92.50%)')

# Shade LEFT tail (p-value region - where sample is)

x_left = x[x <= -1.78]

y_left = stats.norm.pdf(x_left, 0, 1)

ax.fill_between(x_left, y_left, alpha=0.6, color='orange',

label='Left Tail = 0.0375')

# Shade RIGHT tail (p-value region - mirrored)

x_right = x[x >= 1.78]

y_right = stats.norm.pdf(x_right, 0, 1)

ax.fill_between(x_right, y_right, alpha=0.6, color='orange',

label='Right Tail = 0.0375')

# Draw sample statistic lines

ax.axvline(-1.78, color='darkred', linestyle='-', linewidth=4, alpha=0.9,

label='Sample Z = -1.78')

ax.axvline(1.78, color='darkred', linestyle='--', linewidth=3, alpha=0.7,

label='Mirrored Z = +1.78')

ax.axvline(0, color='darkblue', linestyle='-', linewidth=2, alpha=0.6)

# Add value labels

ax.text(-1.78, -0.025, 'Z = -1.78\n(Sample)', ha='center', fontsize=11,

fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(1.78, -0.025, 'Z = +1.78\n(Mirrored)', ha='center', fontsize=11,

fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightcoral', alpha=0.95))

ax.text(0, -0.025, 'μ₀ = $800\n(Hypothesized)', ha='center', fontsize=10,

fontweight='bold', color='darkblue',

bbox=dict(boxstyle='round,pad=0.4', facecolor='lightblue', alpha=0.95))

# Add tail area labels with arrows

ax.annotate('Left Tail\n(Sample Location)\n0.0375',

xy=(-2.5, 0.01), xytext=(-3.2, 0.18),

fontsize=11, fontweight='bold', color='darkorange',

arrowprops=dict(arrowstyle='->', lw=2.5, color='darkorange'),

bbox=dict(boxstyle='round,pad=0.5', facecolor='moccasin',

edgecolor='darkorange', linewidth=2, alpha=0.95))

ax.annotate('Right Tail\n(Mirrored)\n0.0375',

xy=(2.5, 0.01), xytext=(3.2, 0.18),

fontsize=11, fontweight='bold', color='darkorange',

arrowprops=dict(arrowstyle='->', lw=2.5, color='darkorange'),

bbox=dict(boxstyle='round,pad=0.5', facecolor='moccasin',

edgecolor='darkorange', linewidth=2, alpha=0.95))

# Add middle area label

ax.text(0, 0.28, 'Area from -1.78 to +1.78\n= 0.4625 + 0.4625 = 0.9250',

ha='center', fontsize=11, fontweight='bold', color='darkgreen',

bbox=dict(boxstyle='round,pad=0.5', facecolor='lightgreen', alpha=0.95))

# Add total p-value label

ax.text(0, 0.40, 'TWO-TAILED p-value = 0.0375 × 2 = 0.0750 (7.5%)\n(Total Area in BOTH Tails)',

ha='center', fontsize=13, fontweight='bold', color='darkred',

bbox=dict(boxstyle='round,pad=0.7', facecolor='lightyellow',

edgecolor='darkorange', linewidth=3, alpha=0.95))

# Add curved arrows showing both tails

ax.annotate('', xy=(-2.8, 0.37), xytext=(0, 0.38),

arrowprops=dict(arrowstyle='->', lw=2, color='darkred',

connectionstyle="arc3,rad=.3"))

ax.annotate('', xy=(2.8, 0.37), xytext=(0, 0.38),

arrowprops=dict(arrowstyle='->', lw=2, color='darkred',

connectionstyle="arc3,rad=-.3"))

# Formatting

ax.set_title('Two-Tailed Test: Congressional Tax Cut Analysis\n' +

'H₀: μ = $800 vs Hₐ: μ ≠ $800 (Sample: X̄ = $785.10, s = $187.33, n = 500)',

fontsize=13, fontweight='bold', pad=15)

ax.set_xlabel('Z-value (Standard Deviations from Hypothesized Mean)',

fontsize=11, fontweight='bold')

ax.set_ylabel('Probability Density', fontsize=11, fontweight='bold')

ax.set_xlim(-4, 4)

ax.set_ylim(-0.05, 0.45)

ax.grid(True, alpha=0.3, axis='x')

ax.legend(loc='upper right', fontsize=9, framealpha=0.95)

# Add calculation box

calc_text = (

"CALCULATIONS:\n\n"

"① Test Statistic:\n"

" Z = (785.10 - 800) / (187.33/√500)\n"

" = -14.90 / 8.38\n"

" = -1.78\n\n"

"② From Z-table:\n"

" |Z| = 1.78 → Area = 0.4625\n\n"

"③ Left tail area:\n"

" = 0.5000 - 0.4625 = 0.0375\n\n"

"④ Two-tailed p-value:\n"

" = 2 × 0.0375 = 0.0750"

)

ax.text(0.02, 0.97, calc_text, transform=ax.transAxes,

fontsize=8, verticalalignment='top',

bbox=dict(boxstyle='round,pad=0.6', facecolor='lightcyan',

edgecolor='steelblue', linewidth=2, alpha=0.95))

# Add decision box

decision_text = (

"DECISION RULES:\n\n"

"p-value = 0.0750 (7.5%)\n\n"

"✗ If α = 0.05 (5%):\n"

" p-value (0.075) > α (0.05)\n"

" → DO NOT REJECT H₀\n"

" Average savings = $800 ✓\n\n"

"✓ If α = 0.10 (10%):\n"

" p-value (0.075) < α (0.10)\n"

" → REJECT H₀\n"

" Average savings ≠ $800 ✗\n\n"

"7.5% is the MINIMUM α\n"

"needed to reject H₀"

)

ax.text(0.98, 0.97, decision_text, transform=ax.transAxes,

fontsize=8, verticalalignment='top', horizontalalignment='right',

bbox=dict(boxstyle='round,pad=0.6', facecolor='lightyellow',

edgecolor='orange', linewidth=2, alpha=0.95))

plt.tight_layout()

plt.show()From the Z-table: Z = 1.78 gives area = 0.4625

Area in left tail = 0.5000 - 0.4625 = 0.0375

p-value (two-tailed) = 0.0375 × 2 = 0.0750 or 7.5%

Interpretation:

The p-value shows that the lowest α value that can be set and still reject the null hypothesis is 7.5%. This is why we would not reject at an α value of 5%.

If we tested at α = 0.10 (10%), we would reject H₀ because p-value (0.075) < α (0.10).

If we tested at α = 0.05 (5%), we would not reject H₀ because p-value (0.075) > α (0.05).

9.13 Section Exercises: p-Values

27. Define the p-value related to a hypothesis test. Use a graph to explain clearly why the p-value is defined this way and how it can be used to test a hypothesis. Do this for both a one-tailed and a two-tailed test.

28. Congressional Tax Reduction Verification: In summer 1997, Congress passed a federal budget containing several tax reduction provisions. Analysts claimed it would save the average taxpayer $800. A sample of 500 taxpayers showed an average tax reduction of $785.10 with a standard deviation of $277.70. Test the hypothesis at a 5% significance level. Calculate and interpret the p-value.

29. Using the data from the previous problem, compare the α value with the p-value you calculated, and explain why you rejected or did not reject the null hypothesis. Use a graph in your response.

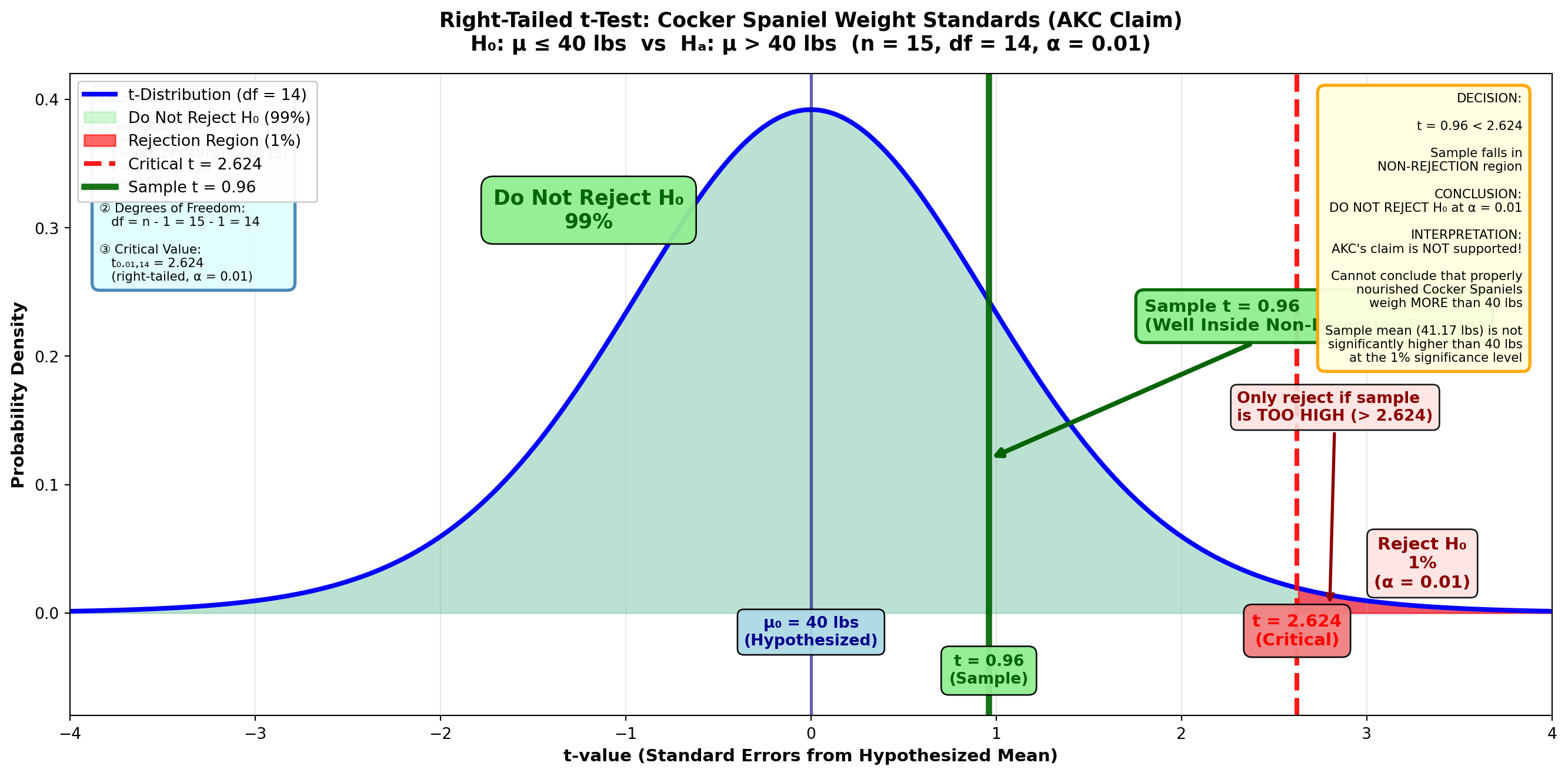

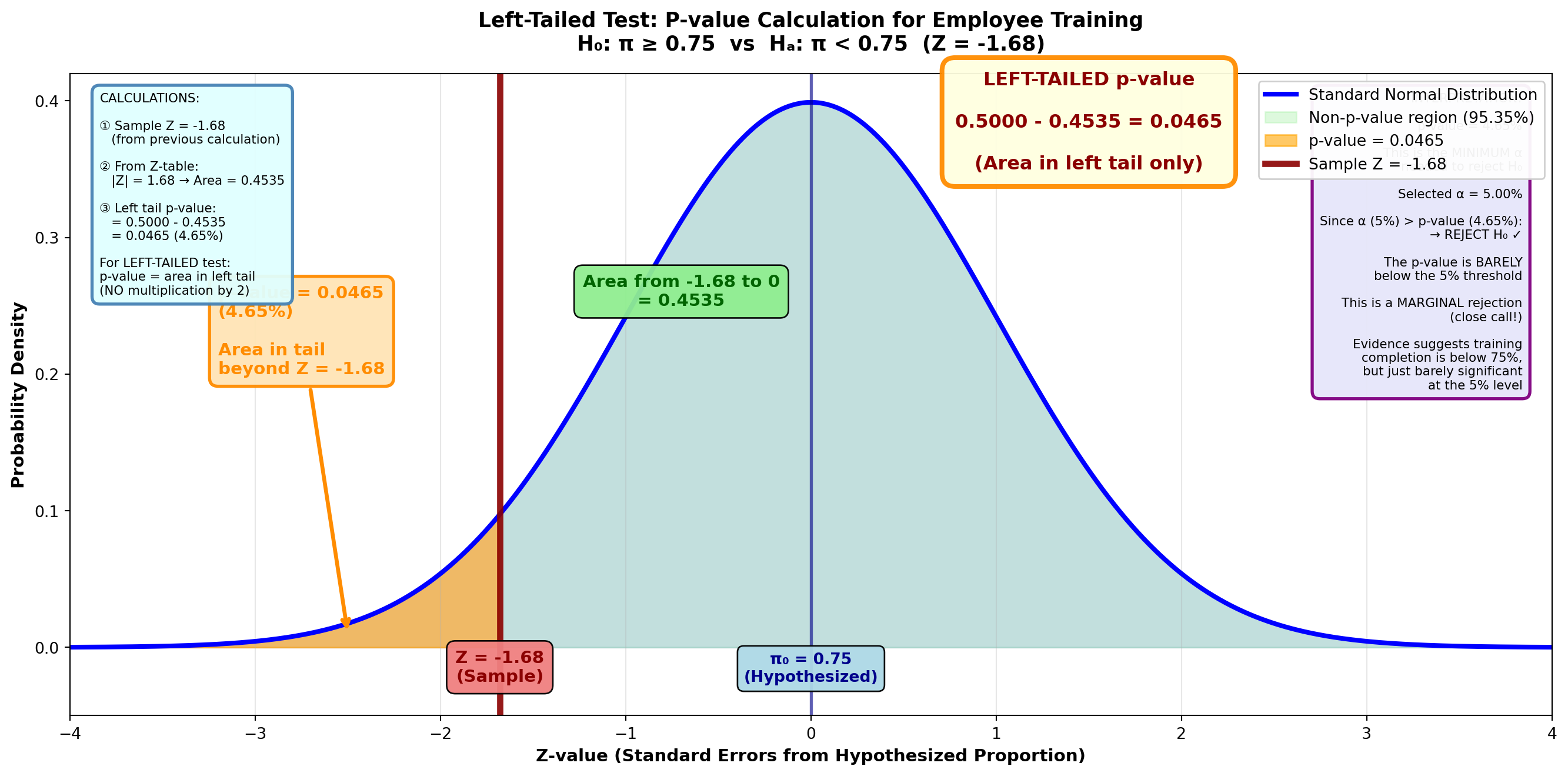

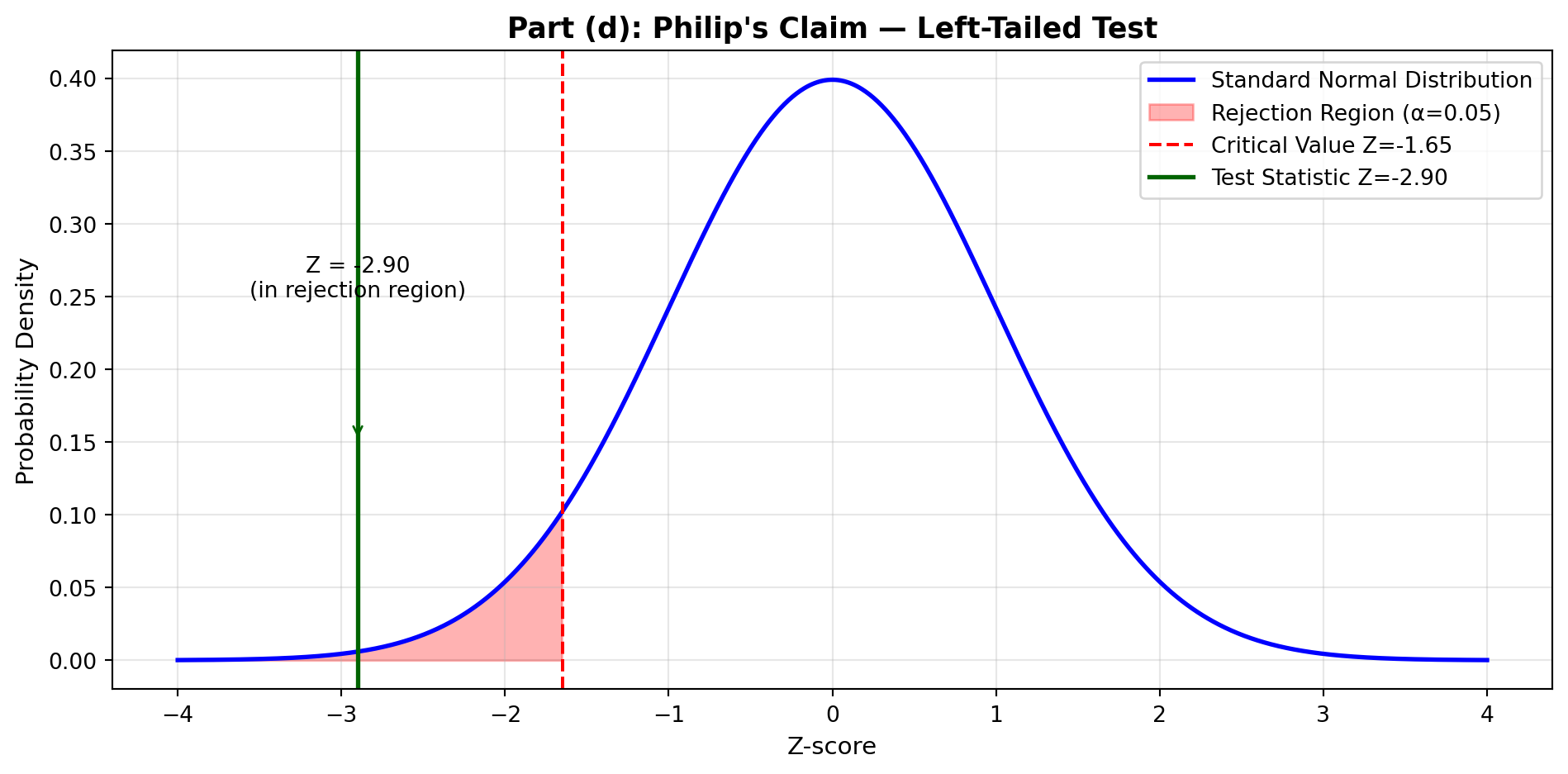

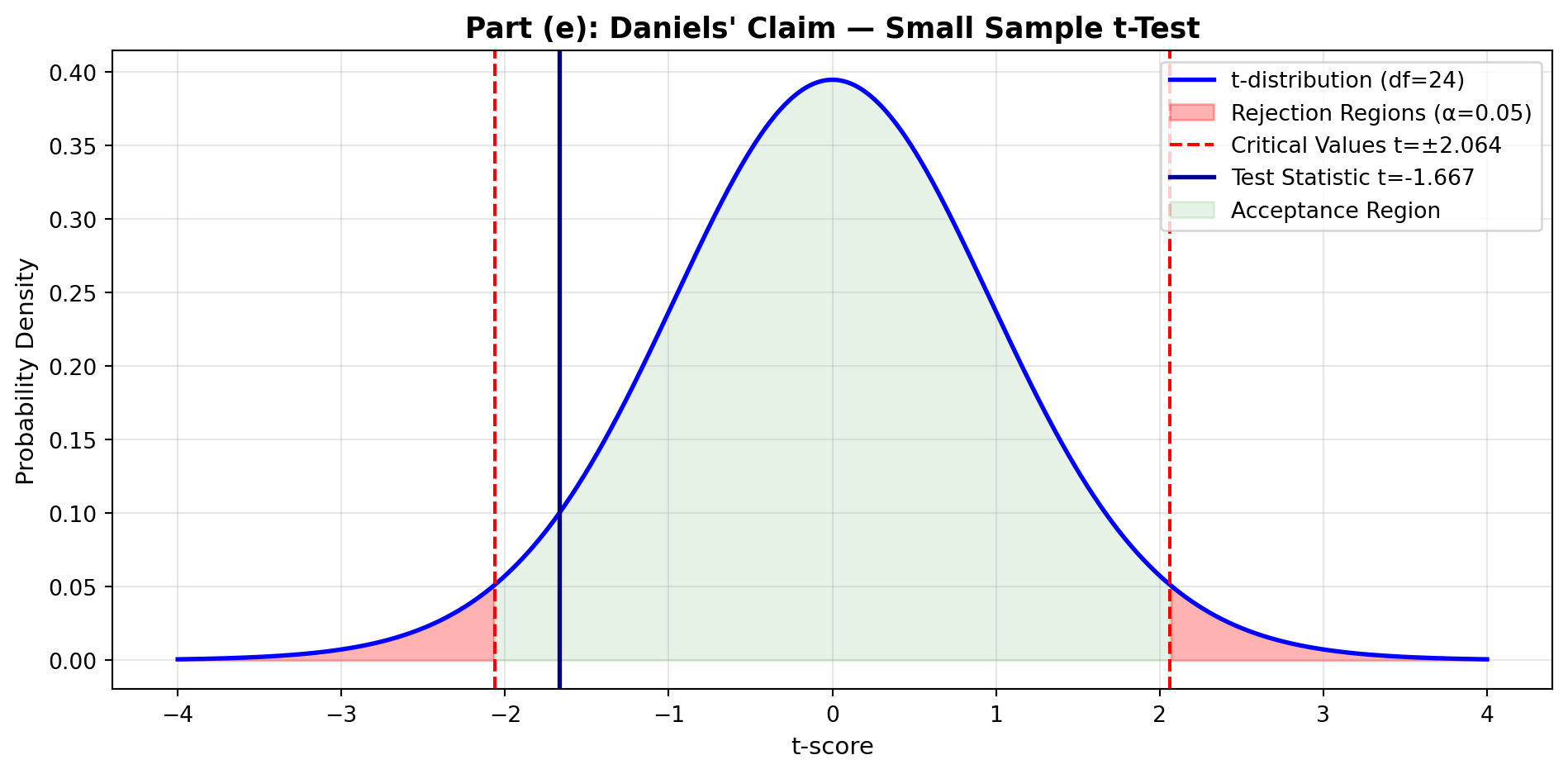

30. Sony PlayStation Sales: In the early 1990s, Sony Corporation introduced its 32-bit PlayStation into the video game market. Management hoped the new product would increase monthly U.S. sales above the $283,000,000 Sony had experienced in the previous decade. A sample of 40 months reported a mean of $297,000,000. Assume a standard deviation of $97,000,000. Test the hypothesis at a 1% significance level. Calculate and interpret the p-value.